[TAC] Stop Human Spam [Paid] 1.4.8

- Thread starter tenants

An issue that we encounter is accounts that make a lot of keyword-stuffed posts and then, after time passes, edit all of their posts to add spam links. Because of the number of posts made, this flies under the radar. I have seen this spammer behavior on other websites as well. It seems to me that the only way to identify these spammers is to track who adds more than X links to their posts within Y days. Please add a function to identify such post-edit spammers.

As we are getting a lot of SHS log entries per day: could you please add an alert when there are SHS log entries? Or display the number in the moderator bar. This will allow us to ban spammers quicker.

To elaborate on this: another factor here is that these spammers often copy posts from elsewhere on the net. They accumulate post count, then they wait, then they edit the posts and retrofit spam links into them.
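A minimal sketch of the "more than X links within Y days" idea, in Python rather than as part of the add-on. The edit-history tuples, the function names and the URL pattern are all assumptions made for illustration; nothing here reflects how StopHumanSpam or XenForo actually store edits.

```python
import re
from collections import defaultdict
from datetime import datetime, timedelta

# Rough URL matcher (an assumption; real link detection would be stricter).
URL_RE = re.compile(r"https?://\S+", re.IGNORECASE)


def count_links(text):
    """Count URL-like strings in a post body."""
    return len(URL_RE.findall(text))


def flag_edit_spammers(edits, max_links, window_days):
    """Return users who added more than max_links links via edits
    inside any window of window_days days.

    edits: iterable of (user, edited_at, old_text, new_text) tuples,
    a hypothetical shape for an edit-history log.
    """
    window = timedelta(days=window_days)
    added = defaultdict(list)  # user -> [(edited_at, links_added)]
    for user, edited_at, old_text, new_text in edits:
        gained = count_links(new_text) - count_links(old_text)
        if gained > 0:
            added[user].append((edited_at, gained))

    flagged = set()
    for user, events in added.items():
        events.sort()
        start = 0
        total = 0
        for when, gained in events:
            total += gained
            # Drop edits that fell out of the sliding window.
            while when - events[start][0] > window:
                total -= events[start][1]
                start += 1
            if total > max_links:
                flagged.add(user)
                break
    return flagged
```

Only links that an edit adds are counted, so a member who posts a link up front is not penalised; flagged accounts would still need a human look before banning.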

Not so much for spammers, but to prevent malicious or petulant editing, we limit editing time to 10 mins or 30 mins depending on user group.

We do limit new members' editing, but the problem is that this type of spamming happens after many posts, a few of which will get positive ratings and therefore a usergroup promotion.

A simple thing we use (and I'm not sure if it could be incorporated into this add-on?) is to check the timezone of the user account [which XF seems to get from the device used to register] vs the timezone of the posting IP address; this highlights when Asian spammers are using US / European proxies.

I've had FBHP turned off on one of my forums for a while (testing something), and have seen some things hit the site.

One of them was a spammer who posted in languages unrelated to the forum (Chinese / Arabic / Russian ... etc.). Obviously no links got through, but the non-link content did.

So, I'm looking to add some sort of character check.

If the post contains more than x letters in Chinese / other ... block (optional as to which languages you choose, obviously).

tenants updated StopHumanSpam - Anti Human Spam with a new update entry:

Detect Character Types

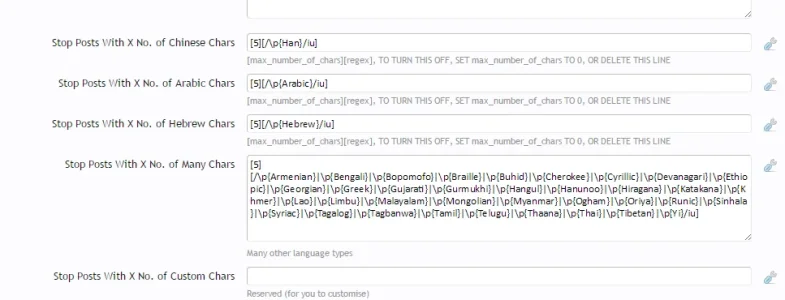

We now detect multiple character types. If a post contains more than x characters of a given character type (the default is 5), the post is blocked. Character types detected (you can customise this):

- Arabic

- Armenian

- Bengali

- Bopomofo

- Braille

- Buhid

- Cherokee

- Cyrillic

- Devanagari

- Ethiopic

- Georgian

- Greek

- Gujarati

- Gurmukhi

- Han

- Hangul

- Hanunoo

- Hebrew

- Hiragana

- Inherited

- Kannada

- Katakana

- Khmer

- Lao

- Limbu

- Malayalam

- Mongolian...

This version stops posts containing Unicode character types that are not relevant to your forum (Russian / Chinese, etc)

Obviously you can set the values to 0 to turn each one off
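For anyone curious how a per-script threshold like this can work, here is a rough Python sketch. It is not the add-on's code: the script table covers only a handful of the listed types, the code-point ranges are the basic Unicode blocks for each script (an approximation), and the names `SCRIPT_RANGES` and `blocked_scripts` are made up for the example.

```python
import re

# Basic Unicode block ranges for a few scripts (approximate, not exhaustive).
SCRIPT_RANGES = {
    "Arabic": r"\u0600-\u06FF",
    "Cyrillic": r"\u0400-\u04FF",
    "Han": r"\u4E00-\u9FFF",
    "Hangul": r"\uAC00-\uD7AF",
    "Hiragana": r"\u3040-\u309F",
    "Katakana": r"\u30A0-\u30FF",
}
SCRIPT_PATTERNS = {
    name: re.compile("[" + rng + "]") for name, rng in SCRIPT_RANGES.items()
}

DEFAULT_LIMIT = 5  # matches the default mentioned in the update


def blocked_scripts(text, limits=None):
    """Return {script: count} for every script whose character count
    exceeds its limit. A limit of 0 switches that script's check off."""
    limits = limits or {}
    hits = {}
    for script, pattern in SCRIPT_PATTERNS.items():
        limit = limits.get(script, DEFAULT_LIMIT)
        if limit == 0:
            continue  # check disabled for this script
        count = len(pattern.findall(text))
        if count > limit:
            hits[script] = count
    return hits
```

A post would be blocked when the returned dict is non-empty. A few foreign characters (a name, a quoted word) stay under the limit, which is the point of counting rather than matching on first sight.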

Could you please make the thread link in the report clickable? I use it every day. It's a drag to have to paste it in the browser and type the rest of the URL.

..in addition, I've made that link clickable

How many spammers have you seen posting in the Cherokee language?

uhhhhmmm.... millions, these tribes must sit there all day spamming on their laptops!

Yeah, I just banged the entire lot into regex. I don't want to feel like I'm picking on Russia / China, although they are generally the character types I see spamming

Anyway, you can cherry pick and remove / add / change rules:

tenants updated StopHumanSpam - Anti Human Spam with a new update entry:

added Russia to the character type detection

Kind of an important one to leave out.... added it back in (see previous update)

A simple thing we use (and I'm not sure if it could be incorporated into this add-on?) is to check the timezone of the user account [which XF seems to get from the device used to register] vs the timezone of the posting IP address; this highlights when Asian spammers are using US / European proxies.

Too risky, there's a good chance of false positives (using your account when you are abroad)

I guess they could be put into moderation.
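As a sketch of the moderation route (an illustration only, not something the add-on does): compare the account's UTC offset with the offset implied by the posting IP, and send large mismatches to moderation instead of blocking them. How the IP's offset is obtained (some geolocation lookup) is left out, and the 3-hour tolerance and function names are arbitrary assumptions.

```python
def offset_gap_hours(account_utc_offset, ip_utc_offset):
    """Clock difference in hours between two UTC offsets.

    Wraps around the 24-hour clock, so UTC-12 and UTC+12 count as
    showing the same time of day.
    """
    diff = abs(account_utc_offset - ip_utc_offset) % 24
    return min(diff, 24 - diff)


def triage_post(account_utc_offset, ip_utc_offset, max_gap_hours=3):
    """Return "moderate" when the account timezone and the IP timezone
    disagree by more than max_gap_hours, otherwise "publish".

    Nothing is rejected outright, so a member posting while abroad
    only lands in the moderation queue.
    """
    if offset_gap_hours(account_utc_offset, ip_utc_offset) > max_gap_hours:
        return "moderate"
    return "publish"
```

For example, an account registered at UTC+8 posting through a UTC-5 proxy gives a gap of 11 hours and would be held for review.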

tenants updated StopHumanSpam - Anti Human Spam with a new update entry:

bug fix - unknown index 'moderate_replies'

This bug has possibly been in there for a couple of versions, I'm surprised no one pointed it out

... fixed an error logging issue when forums are non moderated forums

@tenants as requested, moved to the proper thread. apologies for getting going in the foolbot thread. in regards to the spam management options, you recommend having nothing in the user agent and spam phrases sections? how about the stop forum spam or akismet options? those are the only other things i have filled in.

Thanks @Rambro

I recommended not putting .net and http in the core spam management spam phrases, because your site is at www.example.net, and as you had seen, people uploading images or posting internal links were getting stopped (not due to your StopHumanSpam settings, but due to the settings you added to the core spam phrases).
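To see why those two phrases cause trouble, here is a tiny Python illustration. It assumes the phrase check is a plain case-insensitive substring match, which is a simplification and not a description of XenForo's actual matching; `matched_phrases` and the third phrase are made up.

```python
def matched_phrases(message, spam_phrases):
    """Return the spam phrases found in message, using a naive
    case-insensitive substring match (an assumed matching rule)."""
    lowered = message.lower()
    return [phrase for phrase in spam_phrases if phrase.lower() in lowered]


phrases = [".net", "http", "buy followers"]

# An ordinary internal link on a site hosted at www.example.net
internal_link = "See http://www.example.net/threads/42 for details"
print(matched_phrases(internal_link, phrases))
```

Every internal link contains both `http` and `.net`, so legitimate posts trip the filter even though neither phrase says anything about spam on this site.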

I'm not sure what user agent settings you are referring to (there are none in any of the TAC products). user_agent (which identifies the browser and operating system) is a header value that is always faked by bots, and it has no relation to spam activity. I actually log it for this (and other) reasons, to show that it is a useless way of measuring any type of spam, and has been for many years.

Any of the TAC products can work with any API as part of the core, or included through anyApi. But APIs (some more than others) are notorious for producing false positives. If you have multiple APIs doing the same thing, it is unlikely that you will catch more spammers than using just one or two, but it is likely that you will degrade the user experience (slow API requests) and catch more false positives.

...I am not against APIs, but I am against overkill of APIs, there is no need or advantage, the only reason I use more than 2 on one of my forums is to be able to show a comparison, for instance:

You'll see that there is very little point in using FSpamList with StopForumSpam

StopForumSpam already catches the majority of bots. It never reliably catches 100% of the bots that FBHP does (no API will, since sometimes the IPs are fresh proxies), but it gets quite a lot.

If you use an API that gets 99% of bots, and then find another that gets 99% of bots, it does not mean you will now catch 99.99% of bots. It is more likely that both APIs cover the same data (thus you gain nothing, other than a fallback for when one is down).
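The arithmetic behind that can be shown with made-up numbers. In this Python sketch the bot IDs and both "APIs" are synthetic sets, not real data: the 99.99% figure only appears when the two services miss almost entirely different bots, and when they share the same data the combined rate stays at 99%.

```python
def combined_catch_rate(total_bots, caught_a, caught_b):
    """Fraction of bots caught by at least one of two APIs."""
    return len(caught_a | caught_b) / total_bots


bots = set(range(10_000))

# API A catches 99%: it misses bots 9900..9999.
api_a = set(range(9_900))

# Case 1: API B draws on the same data, so it misses the same bots.
api_b_same = set(api_a)

# Case 2 (best case): API B also catches 99%, but its misses barely
# overlap with A's. It misses bot 9999 plus 99 bots that A catches.
api_b_independent = bots - ({9_999} | set(range(99)))

print(combined_catch_rate(len(bots), api_a, api_b_same))
print(combined_catch_rate(len(bots), api_a, api_b_independent))
```

The first case yields 0.99 and the second 0.9999; real services that draw on shared reports will sit much closer to the first.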

If you use an API that catches a low %, there really is very little point, there is no advantage.

As for Akismet, it's not an API that I've studied. Maybe someone else has some insight?

@tenants thanks for the advice. i turned off fspamlist and spambuster for now since they seem repetitive and removed stopforumspam and project honey pot from the default xenforo options. is it an issue to keep the rest of the phrases in the spam management -> spam phrases or should i leave it completely blank?

question for you on stop human spam - i had a user get flagged for posting about tramadol usage. is there some setting to let established users bypass the word filter?
