Thought I'd share my experience of migrating my hosting/server environment, in case anyone is thinking of doing the same or needs some inspiration.

Background: Been with the same (great) hosting provider for ~8 years, upgrading to higher spec server models twice in that time. My audience is almost wholly Australia-based, but server hosting is/was West Coast USA due to cheaper pricing and greater bandwidth allowance (certainly the case 8+ years ago).

Goal: Save money, whilst still maintaining/improving site speed/experience, and better utilise technology/infrastructure for growth and easy/quick storage (attachments) expansion. Running out of storage space was my main reason for reviewing options and making a change: https://xenforo.com/community/threads/what-to-do-with-growing-attachments-total-size.198695/

XF Site: 5.0GB database, 110GB storage, 2.8 million posts, 60k members, ~40k visitors/mth, ~1.8TB transfer/mth. Community forum active for 18 years.

Current Hosting: A single dedicated server; Intel Xeon E3-1270 v3, 16GB memory, 2 x 480GB SSD storage, 20TB transfer. $115/mth USD

Services: NGINX webserver & php7.4-fpm assigned/tuned for max. 4GB memory, MySQL assigned 8GB memory, Redis cache, Elasticsearch assigned 1GB memory.

( The server was also used to host some other static and WordPress websites, unrelated to the primary XF site/community )

My current hosting company offers dedicated servers only; no VPSs, no attachable block storage, no object storage, no firewall/DNS etc. I use external DNS, CDN, and backup targets/services.

After exploring options and pricing, and discussing experiences with current customers, I chose https://www.linode.com/ for their Australia-based data centre ( 11 total, worldwide ), product offering, support, and pricing. Using coupon code marketplace100 during sign-up gave me $100 credit for 60 days, which I used to performance test their services and make sure they suited. With the remaining credit balance, I'll effectively get the first month free.

New Hosting: 3 x Shared/VPS servers ( 2 x 2GB memory 50GB storage 2TB transfer/mth, 1 x 4GB memory 80GB storage 4TB transfer/mth ), 140GB of attached block storage, edge firewall service, and a private VLAN for communication (free transfer) between the servers. External transfer is pooled, giving me 8TB/mth total. $60/mth USD ( almost half what I was paying )

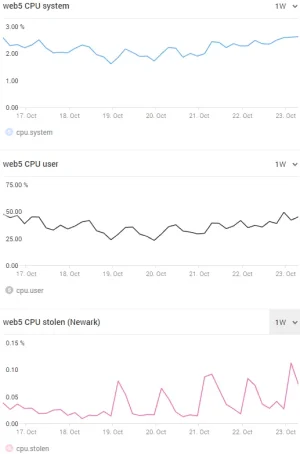

Usage: 1 x 2GB server for Elasticsearch, 1 x 2GB server for web/php, 1 x 4GB server for MySQL and Redis cache

( I also have a couple of further Linode servers/nodes for the static and WordPress websites unrelated to the primary XF site/community. $10/mth total )
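A rough back-of-envelope check of the figures above, as a Python sketch. It only uses numbers quoted in this post; the variable names are illustrative, and it assumes the 110GB of site storage is what ends up on the 140GB block storage volume.

```python
# Back-of-envelope check using only the figures quoted in this post.
# Variable names are illustrative, not from any tool or API.

old_cost_usd = 115        # single dedicated server, per month
new_cost_usd = 60         # 3 x VPS, block storage, firewall, VLAN, per month
extra_nodes_usd = 10      # separate static/WordPress nodes, per month

plan_transfer_tb = [2, 2, 4]   # per-server external transfer allowances
monthly_usage_tb = 1.8         # approximate current site transfer

block_storage_gb = 140
site_storage_gb = 110          # assumption: this all lives on block storage

# Pooled transfer is simply the sum of the per-server allowances.
pooled_tb = sum(plan_transfer_tb)
transfer_used_pct = monthly_usage_tb / pooled_tb * 100

# Saving for the XF site alone, and with the extra nodes included.
saving_pct = (old_cost_usd - new_cost_usd) / old_cost_usd * 100
saving_all_pct = (
    (old_cost_usd - (new_cost_usd + extra_nodes_usd)) / old_cost_usd * 100
)

storage_headroom_gb = block_storage_gb - site_storage_gb

print(f"Pooled transfer: {pooled_tb} TB/mth, {transfer_used_pct:.1f}% used")
print(f"Saving (XF site only): {saving_pct:.1f}%")
print(f"Saving (incl. extra nodes): {saving_all_pct:.1f}%")
print(f"Block storage headroom: {storage_headroom_gb} GB")
```

On these numbers the XF site alone costs about 48% less, or about 39% less once the extra $10/mth nodes are counted, and current transfer uses well under a third of the pooled allowance.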

Pros:

- Australian data centre brings user latency down to ~25ms from ~160ms ( users can feel the site being slightly 'snappier' )

- Attachable block storage, charged per GB, for quick and easy growth in attachments

- Pooled external transfer allowance, so you aren't limited by any individual server's quota

- Edge firewall service, so you don't need a local firewall using up server resources/load

- Private VLAN for free traffic between the servers

- Cost saving of almost HALF of what I was previously paying

- A terrific and very usable/friendly 'dashboard' for setup/configuration/management of your services

Cons:

- Attachable block storage can only be connected to one server at a time

- Because of the above, the load balancer service is complex/risky to use with multiple web/php servers
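For anyone wanting to run a latency comparison like the ~25ms vs ~160ms one in the list above against their own servers, here is a minimal Python sketch. It assumes TCP connect time is an acceptable stand-in for round-trip latency, which is not necessarily how the figures in this post were measured. The function name and defaults are my own.

```python
import socket
import statistics
import time


def tcp_connect_ms(host: str, port: int = 443, samples: int = 5,
                   timeout: float = 3.0) -> float:
    """Return the median time in milliseconds to open a TCP connection."""
    timings = []
    for _ in range(samples):
        start = time.perf_counter()
        # The TCP handshake takes roughly one network round trip.
        # If host is a name rather than an IP, DNS lookup time is included.
        with socket.create_connection((host, port), timeout=timeout):
            pass
        timings.append((time.perf_counter() - start) * 1000)
    return statistics.median(timings)


# Example usage (hostnames are placeholders, substitute your own):
#   print(tcp_connect_ms("old-server.example.com"))
#   print(tcp_connect_ms("new-server.example.com"))
```

Run it from a machine in the same region as your audience, since the result depends entirely on where the client sits.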

How can XF help in future?

- XF's object storage functionality feels risky and unsupported, so I didn't want to use and rely upon it. Had I felt differently, I'd have considered object storage for attachments instead of attachable block storage, and used the object storage for CDN purposes too.

- Consider code_cache and image_cache etc. for multiple web server and load balancer scenarios. That would make zero-downtime and easier upgrades (server or XF) possible. Load balancers and multiple web/XF servers are commonly available hosting options nowadays.
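To illustrate the multi-server cache concern, here is a hedged Python sketch that fingerprints a directory so the result can be compared between web nodes. It is not anything XF provides; the function name is made up, and the idea of running it against each node's code_cache directory is my own assumption about how you might spot drift behind a load balancer.

```python
import hashlib
from pathlib import Path


def dir_fingerprint(root: str) -> str:
    """Return a SHA-256 digest over relative paths and contents under root."""
    digest = hashlib.sha256()
    base = Path(root)
    # Sort so the result does not depend on filesystem listing order.
    for path in sorted(p for p in base.rglob("*") if p.is_file()):
        rel = path.relative_to(base).as_posix()
        digest.update(rel.encode("utf-8"))
        digest.update(b"\0")  # separator between path and contents
        digest.update(path.read_bytes())
        digest.update(b"\0")  # separator between files
    return digest.hexdigest()


# Example usage (path is a placeholder for wherever your cache lives):
#   print(dir_fingerprint("/path/to/xf/internal_data/code_cache"))
```

If two web nodes print different values for the same cache directory, they are serving from different cached files, which is the kind of mismatch that makes load-balanced setups risky today.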