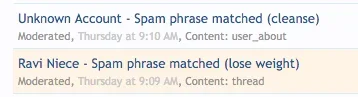

You may be aware of services like Amazon Mechanical Turk or Microworkers. These are crowdsourcing services that allow vendors to pay small amounts of money for the completion of tasks. These tasks range from helping Google and Bing rank search results (human experience) to completing surveys. However, as I discovered today, spammers are using these systems on a massive scale to recruit human spammers.

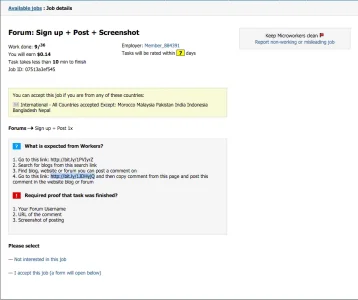

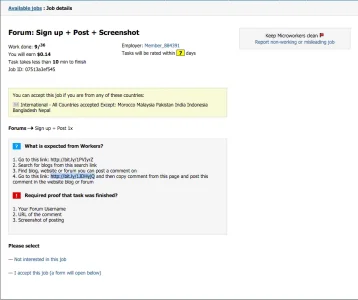

A typical example of a spam recruitment job:

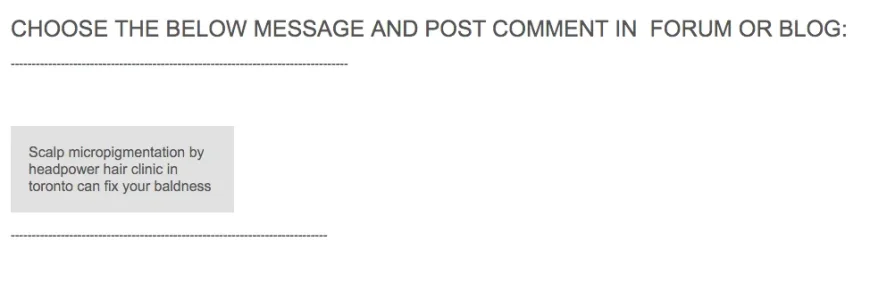

Step one links you to a Google search results page for "smp toronto", which is the keyword the spammer is targeting. You're then asked to find blogs or forums in those results to post a spam comment on. Once you've done so, you visit the second link and see this:

Look familiar? This is the same type of stuff that bots post in forums all the time. Now, rather than paying for an xRumer license, spammers can pay less than 25 cents per human-generated spam comment. This type of operation is particularly challenging to defend against via automated means. CAPTCHA is obviously completely useless, since you're dealing with a 100% human operation. Checks on registration are useless, because these are regular users who have probably not engaged in malicious activity before. You're left with keyword filtering and services like Akismet. Any suggestions on how to deal with this type of spam? I'm currently looking into having Trust+ checks run at post time, along with the ability to detect spam patterns.

After looking through these types of jobs, I have also seen solicitations to post a "one hundred word product review" on a random forum, which of course pays more because the user needs to come up with the text. They then request that the user post a link back to their site. Even spam detection services that work on patterns may not be entirely effective against this type of spammer.
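To make the idea of a post-time check a bit more concrete, here is a rough sketch of a heuristic that combines keyword filtering with a simple pattern: a link posted by an account with almost no history. This is not how Trust+ or Akismet work; the `Post` class, the function names, the weights, and the threshold are all hypothetical and chosen purely for illustration. As noted above, a human-written review may still slip past pattern checks like this.

```python
import re
from dataclasses import dataclass

# Loose URL matcher; an assumption for this sketch, not a full URL parser.
URL_RE = re.compile(r"https?://\S+|www\.\S+", re.IGNORECASE)


@dataclass
class Post:
    body: str
    author_post_count: int  # posts the author made before this one


def spam_score(post, watched_keywords):
    """Return a rough score; higher means more spam-like (weights are arbitrary)."""
    score = 0
    text = post.body.lower()

    if URL_RE.search(post.body):
        score += 1
        # A link from an account with almost no history is the pattern
        # paid spam posters tend to produce.
        if post.author_post_count < 3:
            score += 2

    # Plain keyword filtering against phrases a moderator has flagged.
    if any(kw.lower() in text for kw in watched_keywords):
        score += 2

    return score


def should_hold_for_review(post, watched_keywords, threshold=3):
    """Hold the post for moderator review instead of rejecting it outright."""
    return spam_score(post, watched_keywords) >= threshold
```

Holding a post for review rather than rejecting it keeps false positives cheap, since a legitimate new member who happens to share a link only waits for a moderator.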
