
Using DigitalOcean Spaces or Amazon S3 for file storage


- Thread starter Chris D


Cloudflare R2 is in beta, while Bunny Edge Storage should become compatible with the S3 API later. We now have more choices for object storage.

bunny.net


Edge Storage is now SFTP compatible! S3 is coming next!

We have recently started our global expansion plan for Edge Storage with two new regions in London and Stockholm. With just a few clicks, you can now automatically upload and instantly replicate files to up to 7 global datacenters to deliver unparalleled performance, reliability, and solve...

Any plans to update the S3 plugin to not use ListObjects? It is currently deprecated and has been replaced with ListObjectsV2. Adding onto this, it looks like the AWS package included in the addon for Flysystem has some issues using ListObjectsV2, and it has not been implemented. To modernize, another package may be needed.

Our current use case is Cloudflare R2, and we could not connect the addon to their platform because they are not implementing deprecated functionality.


Not that I've looked. Flysystem uses the official PHP AWS SDK, so what issues are you seeing?

ListObjects, as used by Flysystem, is deprecated on modern S3-compatible providers (like Cloudflare R2).

Code:

Aws\S3\Exception\S3Exception: Error executing "ListObjects" on "https://<Private Bucket URL>.r2.cloudflarestorage.com/?prefix=<bucket-name>%2Fattachments%2F234%2F234839-1682772c8adaf7dd6149be543447c891.jpg%2F&max-keys=1&encoding-type=url"; AWS HTTP error: Server error: `GET https://{..}.r2.cloudflarestorage.com/?prefix={..}%2Fattachments%2F234%2F234839-1682772c8adaf7dd6149be543447c891.jpg%2F&max-keys=1&encoding-type=url` resulted in a `501 Not Implemented` response: <Error><Code>NotImplemented</Code><Message>ListBuckets search parameter encoding-type not implemented</Message></Error> NotImplemented (server): ListBuckets search parameter encoding-type not implemented - <Error><Code>NotImplemented</Code><Message>ListBuckets search parameter encoding-type not implemented</Message></Error>

To continue supporting S3 in the future, we will eventually need to have this plugin updated to use ListObjectsV2. As noted in Cloudflare R2's docs, they have no intention of adding deprecated functions.

This isn't something that XenForo should fix, at least not yet. The issue lies with the underlying third-party Flysystem library. A PR exists to fix this issue (https://github.com/thephpleague/flysystem-aws-s3-v3/pull/298), and when that gets merged and incorporated, then yes, XF should update the XFAws package.

However, I am quite confident that, for us, the issue lies in just one line of one file.

If you edit

Code:

src/addons/XFAws/_vendor/league/flysystem-aws-s3-v3/src/AwsS3Adapter.php

and on line 702 change listObjects to listObjectsV2, it will work just fine. This is called in a method that tests whether a directory exists, and it returns results that will be the same regardless of which command you use.

Disclaimer: of course, whenever you edit code in place, you have to really understand what you are doing. This is not something that your average XF licensee should do, and it is outside of any support agreement or warranty that XF issues. Use only on non-production systems.
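The one-line change above only swaps the command name passed to `getCommand()`. Below is a minimal sketch of the probe that a `doesDirectoryExist()`-style check sends, written as plain functions so it runs without the SDK; `directoryProbeCommand()` and `probeFoundDirectory()` are hypothetical names, not part of Flysystem or XFAws. It assumes, per the AWS API reference, that ListObjectsV2 accepts the same `Bucket`, `Prefix` and `MaxKeys` parameters and returns the same `Contents`/`CommonPrefixes` keys as ListObjects.

```php
<?php
// Hypothetical helper: the command name and parameters a doesDirectoryExist()
// style probe would pass to $s3Client->getCommand(). The parameters mirror
// the adapter's listObjects call; only the command name differs.
function directoryProbeCommand(string $bucket, string $location, bool $useV2): array
{
    $params = [
        'Bucket'  => $bucket,
        // Force a trailing slash so the location is treated as a "directory".
        'Prefix'  => rtrim($location, '/') . '/',
        // A single key is enough to prove something exists under the prefix.
        'MaxKeys' => 1,
    ];

    return [$useV2 ? 'listObjectsV2' : 'listObjects', $params];
}

// Both result shapes carry Contents and CommonPrefixes, so the existence
// test itself does not need to change when switching commands.
function probeFoundDirectory(array $result): bool
{
    return !empty($result['Contents']) || !empty($result['CommonPrefixes']);
}

[$name, $params] = directoryProbeCommand('my-bucket', 'attachments/234', true);
```

Worth noting: in the adapter posted later in this thread, `retrievePaginatedListing()` also calls `getPaginator('ListObjects', ...)`, so editing only the directory probe would leave directory listings on the old API.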

I already went down this rabbit hole.

I tried this because the documentation of the AWS callbacks shows that they SHOULD be interchangeable, but they are not. After making the change and running the command, we get the following response:

Code:

Aws\S3\Exception\S3Exception: Error executing "ListObjectsV2" on "https://{..}.r2.cloudflarestorage.com/?list-type=2&prefix={..}%2Fattachments%2F234%2F234842-1682772c8adaf7dd6149be543447c891.jpg%2F&max-keys=1"; AWS HTTP error: Server error: `GET https://{..}.r2.cloudflarestorage.com/?list-type=2&prefix={..}%2Fattachments%2F234%2F234842-1682772c8adaf7dd6149be543447c891.jpg%2F&max-keys=1` resulted in a `501 Not Implemented` response: <Error><Code>NotImplemented</Code><Message>ListBuckets search parameter list-type not implemented</Message></Error> NotImplemented (server): ListBuckets search parameter list-type not implemented - <Error><Code>NotImplemented</Code><Message>ListBuckets search parameter list-type not implemented</Message>

Which is interesting, because according to this error list-type is not supported, yet CF's R2 docs show that it is supported. So something somewhere still isn't "just right" for this to work with a simple swap. I have a feeling this could be related to the URL being built: the prefix includes the bucket name, even though the bucket is already defined in the connection block.

Regarding "This isn't something that XenForo should fix": I disagree. The addon is offered as an extension of their platform, and it's officially released under "XenForo".

My request is more than fair, considering that this will pose future problems for others wanting to use an S3-compatible endpoint that is modern and does not implement legacy/deprecated functions. From my perspective, if this is an issue and they are using a third-party toolset like the PHP League's Flysystem, they should be flagging the issue on GitHub. I am doing my part by reporting it here, as the addon is officially released and supported by the XenForo team.



This isn't strictly true; they are different for a reason. list-type is valid only on ListObjectsV2, and the fact that R2 doesn't recognise it suggests that R2 isn't quite right, as that is the parameter that really differentiates the two APIs; see https://docs.aws.amazon.com/AmazonS3/latest/API/API_ListObjects.html and https://docs.aws.amazon.com/AmazonS3/latest/API/API_ListObjectsV2.html. I'd be tempted to test this independently of XF and the League library. You should be able to test using something like

aws s3api list-objects --bucket [Your Bucket] --output yaml --endpoint-url https://[Your Bucket].r2.cloudflarestorage.com

and

aws s3api list-objects-v2 --bucket [Your Bucket] --output yaml --endpoint-url https://[Your Bucket].r2.cloudflarestorage.com

and then maybe add --prefix {..}/attachments/234/234842-1682772c8adaf7dd6149be543447c891.jpg/ to the commands as well, to see if that makes a difference.

I haven't got any R2 set up, and I'm not about to start either, but I'm interested in how you get on.

To be honest, I reckon the error message in the log may itself be wrong, and that some other issue, maybe a prefix, is tripping it up.

Interested to hear how you get on
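To line this up with the logs above, here is a small sketch that rebuilds the two query strings the SDK sent. It is a hypothetical illustration, not the SDK's own serializer; which optional parameters appear (for example `encoding-type` only on the v1 call) is read off the logs rather than assumed. The parameter names follow the AWS ListObjects and ListObjectsV2 references, where `list-type=2` is the marker of a V2 request.

```php
<?php
// Hypothetical illustration of the bucket-level GET query strings seen in
// the logs. Only ListObjectsV2 carries list-type=2.
function listObjectsQuery(string $prefix, int $maxKeys, bool $v2, bool $urlEncodeKeys): string
{
    $query = [];
    if ($v2) {
        // The parameter R2 rejected in the second log.
        $query['list-type'] = '2';
    }
    $query['prefix'] = $prefix;
    $query['max-keys'] = $maxKeys;
    if ($urlEncodeKeys) {
        // The parameter R2 rejected in the first log.
        $query['encoding-type'] = 'url';
    }
    // RFC 3986 encoding turns "/" into %2F, as in the logged URLs.
    return http_build_query($query, '', '&', PHP_QUERY_RFC3986);
}

echo listObjectsQuery('data/attachments/234/', 1, false, true), PHP_EOL;
// prefix=data%2Fattachments%2F234%2F&max-keys=1&encoding-type=url
echo listObjectsQuery('data/attachments/234/', 1, true, false), PHP_EOL;
// list-type=2&prefix=data%2Fattachments%2F234%2F&max-keys=1
```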

I am able to work with R2 via aws s3api; list-objects does not work, whereas list-objects-v2 does return output.

Just curious @VersoBit, have you been able to use R2 with XenForo successfully?

Partially. We were able to move our data to the R2 bucket, and we have a worker to serve the external files on our cdn.*.com domain. However, the S3 implementation here does not work with Cloudflare R2, so no one can upload content to R2 from XenForo, which defeats the purpose of using S3/R2.

PHP:

$s3 = function() {
    return new \Aws\S3\S3MultiRegionClient([
        'credentials' => [
            'key' => '<key>',
            'secret' => '<secret>'
        ],
        'version' => 'latest',
        'endpoint' => 'https://r2.cloudflarestorage.com'
    ]);
};

$config['fsAdapters']['data'] = function() use ($s3) {
    return new \League\Flysystem\AwsS3v3\AwsS3Adapter($s3(), '<r2-private-endpoint-token>', '<bucket>');
};

S3MultiRegionClient is used here because R2 is (by definition) a multi-region service. It is interchangeable with S3Client, the only difference being that no region is defined. It is worth noting that the same results are observed with either S3 client type.
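Going by the adapter constructor posted later in this thread (`$client, $bucket, $prefix`), the config above passes the endpoint token as the bucket and the real bucket name as the path prefix. The sketch below is a simplified model (an assumption, not the SDK's actual URL builder) of how a virtual-hosted-style listing URL is assembled from those pieces; `virtualHostedListUrl()` is a hypothetical helper. Its output has the same shape as the logged URLs, with the token in the hostname and the bucket name at the start of `prefix=`, which fits the earlier suspicion that the prefix includes the bucket name.

```php
<?php
// Simplified, hypothetical model of a virtual-hosted-style listing URL: the
// adapter's $bucket argument becomes a subdomain of the endpoint host, and
// its $prefix argument is prepended to every key or listing prefix.
function virtualHostedListUrl(string $endpoint, string $bucket, string $pathPrefix, string $path): string
{
    $parts = parse_url($endpoint);
    $host = $bucket . '.' . $parts['host'];

    $key = ltrim($path, '/');
    if ($pathPrefix !== '') {
        $key = rtrim($pathPrefix, '/') . '/' . $key;
    }

    return $parts['scheme'] . '://' . $host . '/?prefix=' . rawurlencode($key);
}

// Arguments laid out as in the config above: token as bucket, bucket as prefix.
echo virtualHostedListUrl('https://r2.cloudflarestorage.com', 'abc123', 'mybucket', 'attachments/234/'), PHP_EOL;
// https://abc123.r2.cloudflarestorage.com/?prefix=mybucket%2Fattachments%2F234%2F
```

If that reading is right, every request reaches the account-level host with no bucket in the path, which would be consistent with R2 naming the operation "ListBuckets" in both errors. As far as we can tell, Cloudflare's R2 documentation instead configures the client with `'endpoint' => 'https://<ACCOUNT_ID>.r2.cloudflarestorage.com'` and `'region' => 'auto'`, and passes the real bucket name as the bucket; check their current docs before relying on this.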

Interesting, I'll have to dig in further, I guess.

Well, I don't know what you have done. I just did a bit of testing, and list-objects is honoured by R2, as is list-objects-v2, so there's no need to update the AWS SDK. I take it the endpoint above has been modified?

Getting R2 to work has been a bit tricky, but I've got it going now...


First, I was unable to get the PortableVisibilityConverter working (I tried patching in the files from the repos), so I had to modify AwsS3Adapter.php to change every instance of public-read to private. This is because R2 is, by nature, a private bucket and cannot be made public:

PHP:

<?php

namespace League\Flysystem\AwsS3v3;

use Aws\Result;
use Aws\S3\Exception\DeleteMultipleObjectsException;
use Aws\S3\Exception\S3Exception;
use Aws\S3\Exception\S3MultipartUploadException;
use Aws\S3\S3Client;
use Aws\S3\S3ClientInterface;
use League\Flysystem\AdapterInterface;
use League\Flysystem\Adapter\AbstractAdapter;
use League\Flysystem\Adapter\CanOverwriteFiles;
use League\Flysystem\Config;
use League\Flysystem\Util;

class AwsS3Adapter extends AbstractAdapter implements CanOverwriteFiles
{
    const PUBLIC_GRANT_URI = 'http://acs.amazonaws.com/groups/global/AllUsers';

    /**
     * @var array
     */
    protected static $resultMap = [
        'Body' => 'contents',
        'ContentLength' => 'size',
        'ContentType' => 'mimetype',
        'Size' => 'size',
        'Metadata' => 'metadata',
        'StorageClass' => 'storageclass',
        'ETag' => 'etag',
        'VersionId' => 'versionid'
    ];

    /**
     * @var array
     */
    protected static $metaOptions = [
        'ACL',
        'CacheControl',
        'ContentDisposition',
        'ContentEncoding',
        'ContentLength',
        'ContentMD5',
        'ContentType',
        'Expires',
        'GrantFullControl',
        'GrantRead',
        'GrantReadACP',
        'GrantWriteACP',
        'Metadata',
        'RequestPayer',
        'SSECustomerAlgorithm',
        'SSECustomerKey',
        'SSECustomerKeyMD5',
        'SSEKMSKeyId',
        'ServerSideEncryption',
        'StorageClass',
        'Tagging',
        'WebsiteRedirectLocation',
    ];

    /**
     * @var S3ClientInterface
     */
    protected $s3Client;

    /**
     * @var string
     */
    protected $bucket;

    /**
     * @var array
     */
    protected $options = [];

    /**
     * @var bool
     */
    private $streamReads;

    public function __construct(S3ClientInterface $client, $bucket, $prefix = '', array $options = [], $streamReads = true)
    {
        $this->s3Client = $client;
        $this->bucket = $bucket;
        $this->setPathPrefix($prefix);
        $this->options = $options;
        $this->streamReads = $streamReads;
    }

    /**
     * Get the S3Client bucket.
     *
     * @return string
     */
    public function getBucket()
    {
        return $this->bucket;
    }

    /**
     * Set the S3Client bucket.
     *
     * @return string
     */
    public function setBucket($bucket)
    {
        $this->bucket = $bucket;
    }

    /**
     * Get the S3Client instance.
     *
     * @return S3ClientInterface
     */
    public function getClient()
    {
        return $this->s3Client;
    }

    /**
     * Write a new file.
     *
     * @param string $path
     * @param string $contents
     * @param Config $config Config object
     *
     * @return false|array false on failure file meta data on success
     */
    public function write($path, $contents, Config $config)
    {
        return $this->upload($path, $contents, $config);
    }

    /**
     * Update a file.
     *
     * @param string $path
     * @param string $contents
     * @param Config $config Config object
     *
     * @return false|array false on failure file meta data on success
     */
    public function update($path, $contents, Config $config)
    {
        return $this->upload($path, $contents, $config);
    }

    /**
     * Rename a file.
     *
     * @param string $path
     * @param string $newpath
     *
     * @return bool
     */
    public function rename($path, $newpath)
    {
        if ( ! $this->copy($path, $newpath)) {
            return false;
        }

        return $this->delete($path);
    }

    /**
     * Delete a file.
     *
     * @param string $path
     *
     * @return bool
     */
    public function delete($path)
    {
        $location = $this->applyPathPrefix($path);

        $command = $this->s3Client->getCommand(
            'deleteObject',
            [
                'Bucket' => $this->bucket,
                'Key' => $location,
            ]
        );

        $this->s3Client->execute($command);

        return ! $this->has($path);
    }

    /**
     * Delete a directory.
     *
     * @param string $dirname
     *
     * @return bool
     */
    public function deleteDir($dirname)
    {
        try {
            $prefix = $this->applyPathPrefix($dirname) . '/';
            $this->s3Client->deleteMatchingObjects($this->bucket, $prefix);
        } catch (DeleteMultipleObjectsException $exception) {
            return false;
        }

        return true;
    }

    /**
     * Create a directory.
     *
     * @param string $dirname directory name
     * @param Config $config
     *
     * @return bool|array
     */
    public function createDir($dirname, Config $config)
    {
        return $this->upload($dirname . '/', '', $config);
    }

    /**
     * Check whether a file exists.
     *
     * @param string $path
     *
     * @return bool
     */
    public function has($path)
    {
        $location = $this->applyPathPrefix($path);

        if ($this->s3Client->doesObjectExist($this->bucket, $location, $this->options)) {
            return true;
        }

        return $this->doesDirectoryExist($location);
    }

    /**
     * Read a file.
     *
     * @param string $path
     *
     * @return false|array
     */
    public function read($path)
    {
        $response = $this->readObject($path);

        if ($response !== false) {
            $response['contents'] = $response['contents']->getContents();
        }

        return $response;
    }

    /**
     * List contents of a directory.
     *
     * @param string $directory
     * @param bool   $recursive
     *
     * @return array
     */
    public function listContents($directory = '', $recursive = false)
    {
        $prefix = $this->applyPathPrefix(rtrim($directory, '/') . '/');
        $options = ['Bucket' => $this->bucket, 'Prefix' => ltrim($prefix, '/')];

        if ($recursive === false) {
            $options['Delimiter'] = '/';
        }

        $listing = $this->retrievePaginatedListing($options);
        $normalizer = [$this, 'normalizeResponse'];
        $normalized = array_map($normalizer, $listing);

        return Util::emulateDirectories($normalized);
    }

    /**
     * @param array $options
     *
     * @return array
     */
    protected function retrievePaginatedListing(array $options)
    {
        $resultPaginator = $this->s3Client->getPaginator('ListObjects', $options);
        $listing = [];

        foreach ($resultPaginator as $result) {
            $listing = array_merge($listing, $result->get('Contents') ?: [], $result->get('CommonPrefixes') ?: []);
        }

        return $listing;
    }

    /**
     * Get all the meta data of a file or directory.
     *
     * @param string $path
     *
     * @return false|array
     */
    public function getMetadata($path)
    {
        $command = $this->s3Client->getCommand(
            'headObject',
            [
                'Bucket' => $this->bucket,
                'Key' => $this->applyPathPrefix($path),
            ] + $this->options
        );

        /* @var Result $result */
        try {
            $result = $this->s3Client->execute($command);
        } catch (S3Exception $exception) {
            if ($this->is404Exception($exception)) {
                return false;
            }

            throw $exception;
        }

        return $this->normalizeResponse($result->toArray(), $path);
    }

    /**
     * @return bool
     */
    private function is404Exception(S3Exception $exception)
    {
        $response = $exception->getResponse();

        if ($response !== null && $response->getStatusCode() === 404) {
            return true;
        }

        return false;
    }

    /**
     * Get all the meta data of a file or directory.
     *
     * @param string $path
     *
     * @return false|array
     */
    public function getSize($path)
    {
        return $this->getMetadata($path);
    }

    /**
     * Get the mimetype of a file.
     *
     * @param string $path
     *
     * @return false|array
     */
    public function getMimetype($path)
    {
        return $this->getMetadata($path);
    }

    /**
     * Get the timestamp of a file.
     *
     * @param string $path
     *
     * @return false|array
     */
    public function getTimestamp($path)
    {
        return $this->getMetadata($path);
    }

    /**
     * Write a new file using a stream.
     *
     * @param string   $path
     * @param resource $resource
     * @param Config   $config Config object
     *
     * @return array|false false on failure file meta data on success
     */
    public function writeStream($path, $resource, Config $config)
    {
        return $this->upload($path, $resource, $config);
    }

    /**
     * Update a file using a stream.
     *
     * @param string   $path
     * @param resource $resource
     * @param Config   $config Config object
     *
     * @return array|false false on failure file meta data on success
     */
    public function updateStream($path, $resource, Config $config)
    {
        return $this->upload($path, $resource, $config);
    }

    /**
     * Copy a file.
     *
     * @param string $path
     * @param string $newpath
     *
     * @return bool
     */
    public function copy($path, $newpath)
    {
        try {
            $this->s3Client->copy(
                $this->bucket,
                $this->applyPathPrefix($path),
                $this->bucket,
                $this->applyPathPrefix($newpath),
                'private', // the ACL argument must be a string; R2 objects are always private
                $this->options
            );
        } catch (S3Exception $e) {
            return false;
        }

        return true;
    }

    /**
     * Read a file as a stream.
     *
     * @param string $path
     *
     * @return array|false
     */
    public function readStream($path)
    {
        $response = $this->readObject($path);

        if ($response !== false) {
            $response['stream'] = $response['contents']->detach();
            unset($response['contents']);
        }

        return $response;
    }

    /**
     * Read an object and normalize the response.
     *
     * @param string $path
     *
     * @return array|bool
     */
    protected function readObject($path)
    {
        $options = [
            'Bucket' => $this->bucket,
            'Key' => $this->applyPathPrefix($path),
        ] + $this->options;

        if ($this->streamReads && ! isset($options['@http']['stream'])) {
            $options['@http']['stream'] = true;
        }

        $command = $this->s3Client->getCommand('getObject', $options + $this->options);

        try {
            /** @var Result $response */
            $response = $this->s3Client->execute($command);
        } catch (S3Exception $e) {
            return false;
        }

        return $this->normalizeResponse($response->toArray(), $path);
    }

    /**
     * Set the visibility for a file.
     *
     * @param string $path
     * @param string $visibility
     *
     * @return array|false file meta data
     */
    public function setVisibility($path, $visibility)
    {
        $command = $this->s3Client->getCommand(
            'putObjectAcl',
            [
                'Bucket' => $this->bucket,
                'Key' => $this->applyPathPrefix($path),
                'ACL' => 'private',
            ]
        );

        try {
            $this->s3Client->execute($command);
        } catch (S3Exception $exception) {
            return false;
        }

        return compact('path', 'visibility');
    }

    /**
     * Get the visibility of a file.
     *
     * @param string $path
     *
     * @return array|false
     */
    public function getVisibility($path)
    {
        return ['visibility' => $this->getRawVisibility($path)];
    }

    /**
     * {@inheritdoc}
     */
    public function applyPathPrefix($path)
    {
        return ltrim(parent::applyPathPrefix($path), '/');
    }

    /**
     * {@inheritdoc}
     */
    public function setPathPrefix($prefix)
    {
        $prefix = ltrim((string) $prefix, '/');

        return parent::setPathPrefix($prefix);
    }

    /**
     * Get the object acl presented as a visibility.
     *
     * @param string $path
     *
     * @return string
     */
    protected function getRawVisibility($path)
    {
        $command = $this->s3Client->getCommand(
            'getObjectAcl',
            [
                'Bucket' => $this->bucket,
                'Key' => $this->applyPathPrefix($path),
            ]
        );

        $result = $this->s3Client->execute($command);
        $visibility = AdapterInterface::VISIBILITY_PRIVATE;

        foreach ($result->get('Grants') as $grant) {
            if (
                isset($grant['Grantee']['URI'])
                && $grant['Grantee']['URI'] === self::PUBLIC_GRANT_URI
                && $grant['Permission'] === 'READ'
            ) {
                $visibility = AdapterInterface::VISIBILITY_PUBLIC;
                break;
            }
        }

        return $visibility;
    }

    /**
     * Upload an object.
     *
     * @param string          $path
     * @param string|resource $body
     * @param Config          $config
     *
     * @return array|bool
     */
    protected function upload($path, $body, Config $config)
    {
        $key = $this->applyPathPrefix($path);
        $options = $this->getOptionsFromConfig($config);
        $acl = array_key_exists('ACL', $options) ? $options['ACL'] : 'private';

        if (!$this->isOnlyDir($path)) {
            if ( ! isset($options['ContentType'])) {
                $options['ContentType'] = Util::guessMimeType($path, $body);
            }

            if ( ! isset($options['ContentLength'])) {
                $options['ContentLength'] = is_resource($body) ? Util::getStreamSize($body) : Util::contentSize($body);
            }

            if ($options['ContentLength'] === null) {
                unset($options['ContentLength']);
            }
        }

        try {
            $this->s3Client->upload($this->bucket, $key, $body, $acl, ['params' => $options]);
        } catch (S3MultipartUploadException $multipartUploadException) {
            return false;
        }

        return $this->normalizeResponse($options, $path);
    }

    /**
     * Check if the path contains only directories
     *
     * @param string $path
     *
     * @return bool
     */
    private function isOnlyDir($path)
    {
        return substr($path, -1) === '/';
    }

    /**
     * Get options from the config.
     *
     * @param Config $config
     *
     * @return array
     */
    protected function getOptionsFromConfig(Config $config)
    {
        $options = $this->options;

        if ($visibility = $config->get('visibility')) {
            // For local reference
            $options['visibility'] = $visibility;
            // For external reference
            $options['ACL'] = 'private';
        }

        if ($mimetype = $config->get('mimetype')) {
            // For local reference
            $options['mimetype'] = $mimetype;
            // For external reference
            $options['ContentType'] = $mimetype;
        }

        foreach (static::$metaOptions as $option) {
            if ( ! $config->has($option)) {
                continue;
            }
            $options[$option] = $config->get($option);
        }

        return $options;
    }

    /**
     * Normalize the object result array.
     *
     * @param array  $response
     * @param string $path
     *
     * @return array
     */
    protected function normalizeResponse(array $response, $path = null)
    {
        $result = [
            'path' => $path ?: $this->removePathPrefix(
                isset($response['Key']) ? $response['Key'] : $response['Prefix']
            ),
        ];
        $result = array_merge($result, Util::pathinfo($result['path']));

        if (isset($response['LastModified'])) {
            $result['timestamp'] = strtotime($response['LastModified']);
        }

        if ($this->isOnlyDir($result['path'])) {
            $result['type'] = 'dir';
            $result['path'] = rtrim($result['path'], '/');

            return $result;
        }

        return array_merge($result, Util::map($response, static::$resultMap), ['type' => 'file']);
    }

    /**
     * @param string $location
     *
     * @return bool
     */
    protected function doesDirectoryExist($location)
    {
        // Maybe this isn't an actual key, but a prefix.
        // Do a prefix listing of objects to determine.
        $command = $this->s3Client->getCommand(
            'listObjects',
            [
                'Bucket' => $this->bucket,
                'Prefix' => rtrim($location, '/') . '/',
                'MaxKeys' => 1,
            ]
        );

        try {
            $result = $this->s3Client->execute($command);

            return $result['Contents'] || $result['CommonPrefixes'];
        } catch (S3Exception $e) {
            if (in_array($e->getStatusCode(), [403, 404], true)) {
                return false;
            }

            throw $e;
        }
    }
}

While attempting to get things working, we also encountered issues with PHP 8.1.*, so if you intend to use R2 and are running a modern version of PHP, you might need to make the modification reported in this bug report.
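Rather than hand-editing every `public-read` in the adapter, the same effect could be kept in one place. A minimal sketch, assuming Flysystem 1.x's visibility strings `'public'` and `'private'`; `aclForVisibility()` and its `$forcePrivate` switch are hypothetical, not an existing option of the adapter or of XFAws.

```php
<?php
// Hypothetical helper: maps a Flysystem 1.x visibility string to an S3 canned
// ACL. With $forcePrivate = true every object is written as 'private', which
// is the behaviour the hand-patched adapter above hard-codes.
function aclForVisibility(string $visibility, bool $forcePrivate): string
{
    if ($forcePrivate) {
        return 'private';
    }

    switch ($visibility) {
        case 'public':
            return 'public-read'; // the mapping upstream Flysystem 1.x uses
        case 'private':
            return 'private';
        default:
            throw new InvalidArgumentException("Unknown visibility: {$visibility}");
    }
}
```

Keeping the decision in one function means an R2 deployment flips a single flag, while an S3 deployment that does want public objects keeps the upstream behaviour.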

Beyond that, follow the guide as you normally would. For added optimization and performance:

- Use Attachment Improvements by @Xon and enable X-Accel.

- Create a Cloudflare Worker for your internal resources.

  - Map it to an endpoint on your domain or use the workers.dev endpoint.

  - Restrict access to your origin only (via rules and in the worker script).

  - Use NGINX to keep a hot cache on your system for serving the internal data.

- Create a Cloudflare Worker for your external resources (this offloads traffic from the origin and puts it on the edge).

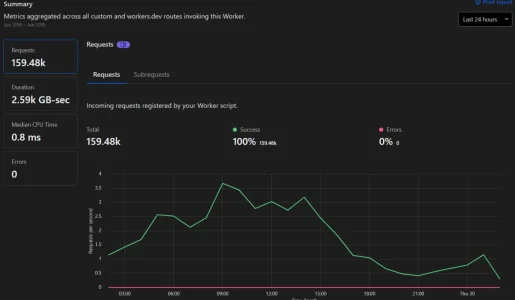

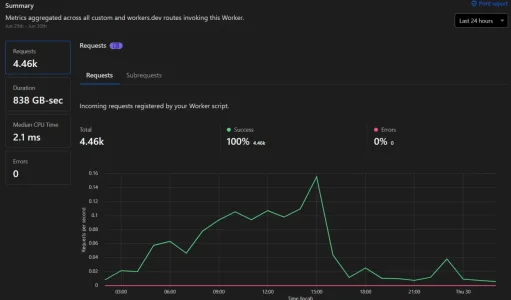

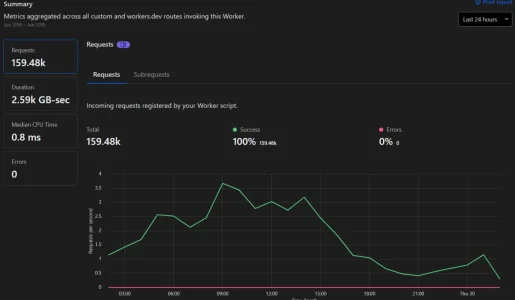

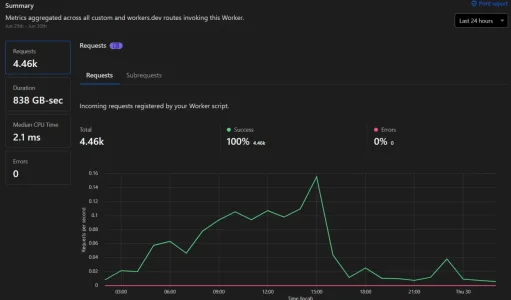

Offloaded requests on cdn.* (external data - the stuff everyone can access)

Offloaded internal data (X-Accel + cache). I was hoping to show how the cache reduces hits on the endpoint, but the chart size makes that hard!

Our origin servers are hosted with Linode, which gives us very low-latency access to R2.

An example of a worker to use with R2 + CF cache would be: https://developers.cloudflare.com/r2/examples/cache-api/
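For anyone new to the X-Accel step in the list above: PHP decides whether the visitor may see a file and then hands the transfer to NGINX through the `X-Accel-Redirect` response header, which NGINX resolves against a location marked `internal`. A minimal sketch under stated assumptions: `/r2-internal/` is a hypothetical NGINX location that would proxy (and cache) the private worker, and `xAccelHeaders()` is an illustrative helper, not how Attachment Improvements implements it.

```php
<?php
// Hypothetical sketch: PHP authorises the download, then NGINX serves the
// bytes from an internal location. '/r2-internal/' is an assumed NGINX
// location (marked "internal") that would proxy and cache the private worker.
function xAccelHeaders(string $internalLocation, string $objectKey, string $mimeType): array
{
    // Encode each path segment but keep the slashes between them.
    $segments = array_map('rawurlencode', explode('/', ltrim($objectKey, '/')));
    $target = rtrim($internalLocation, '/') . '/' . implode('/', $segments);

    return [
        'X-Accel-Redirect: ' . $target,
        'Content-Type: ' . $mimeType,
    ];
}

// In a request handler these would be sent with header(), leaving the
// response body empty for NGINX to fill in.
$headers = xAccelHeaders('/r2-internal/', 'attachments/234/a b.jpg', 'image/jpeg');
```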

I hate asking this while you fellas are doing real technical troubleshooting, but I've been trying to follow this thread. As an old dev (certainly not a server guy), I have to admit I don't get the specifics of cloud storage. I just had to bump my VPS up a package because I was out of space, and I'd love to offload some storage and my backups.

Cloudflare reports:

- Total requests: 28.41M

- Percent cached: 68.36%

- Total data served: 5 TB

- Data cached: 4 TB

My /internal_data/attachments is about 150 GB.

I'm curious whether anybody has similar stats and what their monthly storage costs are.

It seems the public/private forum storage concern may be solved by private/public buckets?

Has anybody got external storage working with one of the Bandwidth Alliance members?
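As a rough worked example using only the numbers above (decimal units assumed, and treating "Data cached" as bytes served from Cloudflare's cache, which is itself an assumption), the share of traffic still hitting the origin can be estimated like this. The storage price is deliberately a parameter: the `0.02` below is a placeholder, not any provider's actual rate, and the helper names are hypothetical.

```php
<?php
// Rough figures derived from the Cloudflare stats above; decimal units assumed.
function uncachedShare(float $total, float $cached): float
{
    // Fraction of traffic that still had to come from the origin.
    return ($total - $cached) / $total;
}

function monthlyStorageCost(float $storedGb, float $pricePerGbMonth): float
{
    // Storage only: request and egress fees depend on the provider.
    return $storedGb * $pricePerGbMonth;
}

$bytesFromOrigin = uncachedShare(5.0, 4.0);          // 0.2, i.e. about 1 TB of 5 TB
$requestsFromOrigin = 28.41e6 * (1 - 0.6836);        // roughly 8.99 million requests
$placeholderCost = monthlyStorageCost(150.0, 0.02);  // 150 GB at a placeholder price
```

With zero-egress storage such as R2, mainly the storage line and request fees apply; with S3 outside AWS, the uncached 1 TB would also be billed as egress.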


XenForo updated Using DigitalOcean Spaces or Amazon S3 for file storage in XF 2.1+ with a new update entry:

Minor change: Smaller add-on download


This update is a very minor maintenance release.

As well as including the latest version of the Amazon AWS SDK (3.231.7), this release now ships a much smaller subset of the behemoth that is the full SDK, including only the files necessary to access Amazon S3 services.

There is no real need to install this version if everything is working as expected.

Note

Simply upgrading the add-on will leave remnants of the full-size Amazon AWS SDK on your file system. If you wish to avoid...


Yeah, for sure, R2 would be your best bet. The problem with S3 for you is that, unless you are hosting your site on AWS, you will end up paying a lot for egress traffic, as your internal data won't be cached between the storage and web servers.

R2 has been extremely performant for both internal and external data; using Attachment Improvements, we've set up an NGINX cache on the private worker URL that we hit for X-Accel requests.

The downside is the modifications we needed to make to get it going (noted in my previous post).

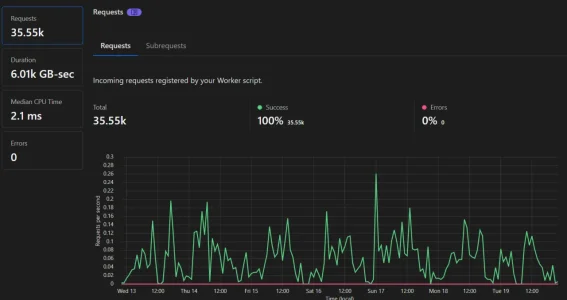

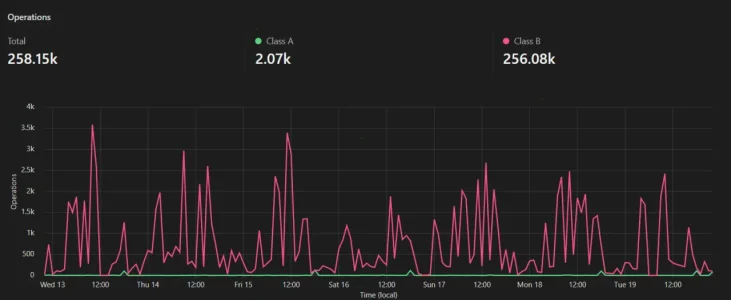

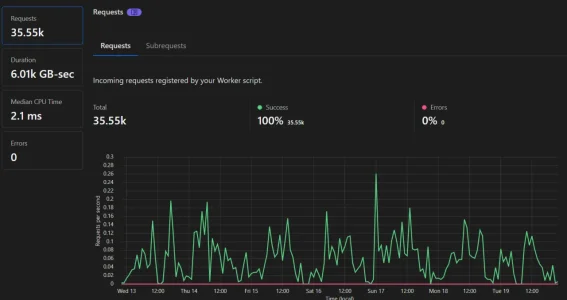

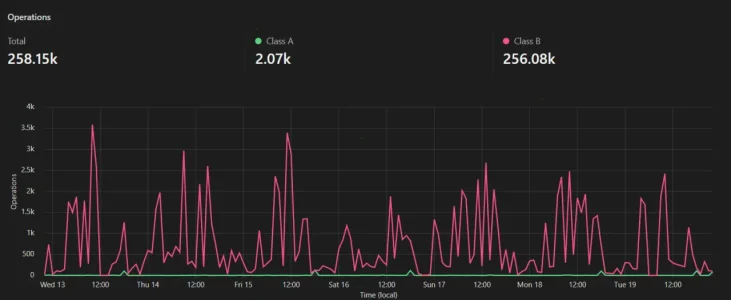

I wanted to provide some updated results, with longer charts, for everyone looking to use R2:

external data worker

external data bucket

internal data worker

internal data bucket

We have not noticed any performance issues when users access content since we switched, either.


Are those your 24-hour stats, or some other time block?


Here's what I wrote up before about the migration:

Moving UK host to UK shared or VPS - Suggestions ?

We're currently based on a VPS in the UK. We've been given potential notice to leave because they want everyone using their own in-house management software rather than Plesk or cPanel. While this isn't a major issue in itself, it does force our hand a bit because it's just so expensive...

The big piece: optimize your attachments/images first. You can probably shrink them by half or more.

Also, I use Vultr, so my host is a partner in the Bandwidth Alliance program.


TPerry


Regarding "And what about if DigitalOcean suspends your account? Then your forum is ruined": ahem... if they suspend your account (specifically the cloud-related stuff), you are screwed anyway. That's why you MUST select a hosting platform that can meet your requirements. If they say drug-related stuff is taboo, then you probably don't want to host with them.

I run a fully legal cannabis website, and Amazon S3 suspended my account (good job I was only using it for testing purposes).