A bit of a field report: When I routinely checked the dashboard of IP Thread Monitor this morning, something was unusual. Over the last couple of days it had been quiet on the scraping front, and the massive number of blocked countries and ASNs had done their part to let my server live an easy life. Last night, however, things changed a bit:

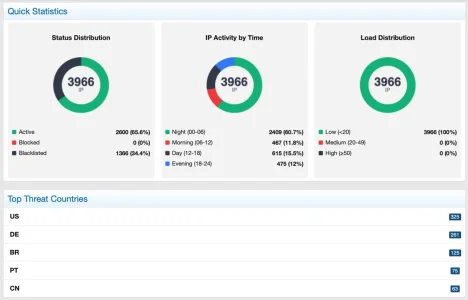

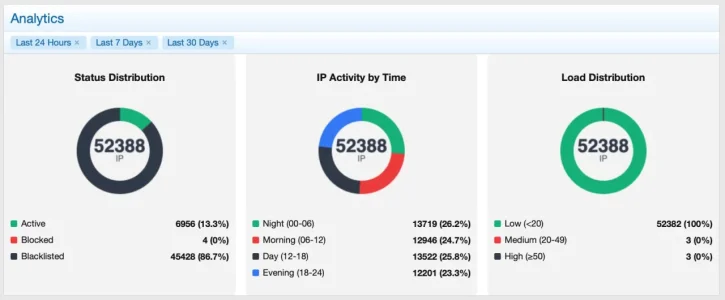

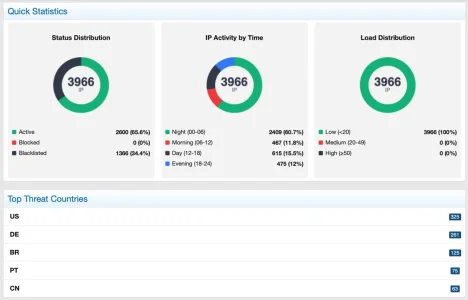

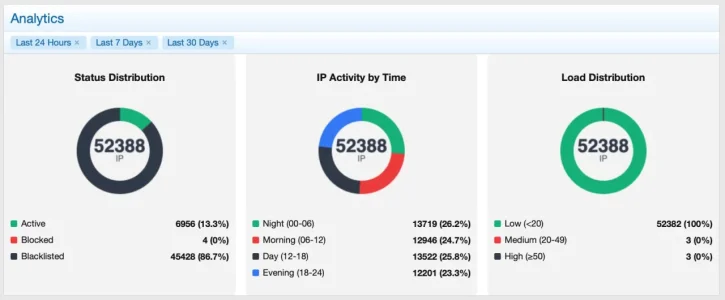

The number of IPs visiting within 24 hours had gone up again - lately it had been around 1,200, at most maybe 2,000. Typically around 700 get through; the rest are blacklisted. Over the last 30 days the statistics look like this: a deflection rate of more than 80%.

However, now I was looking at a 34% rate and 2,400 visits between midnight and six in the morning, a time when only very few genuine visitors come to my forum. There are some, but few.

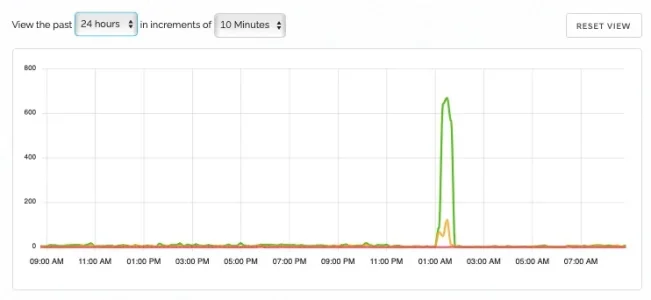

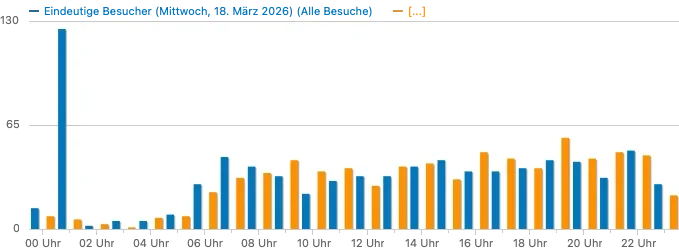

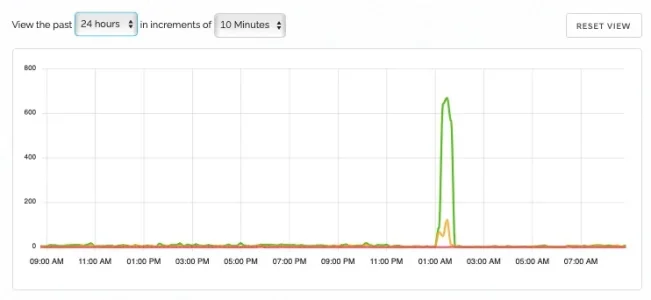

A check at the proxycheck.io dashboard showed a massive (for my environment) peak of different IPs showing up between one and two o'clock in the morning:

Clearly not normal traffic. Yet proxycheck.io had identified only a fraction of them as bad, and my country and ASN blocking had let them through as well. Strange. No peak among registered visitors, so it had to be guests. No peak in the Matomo statistics, so these visitors did not trigger the tracking. Time to dive down the rabbit hole.

I SSHed into the server and started analyzing web.log:

Code:

$ grep "18/Mar/2026:01:" web.log | grep " 200" | wc -l

gave me 5662 entries with status code 200 between 1 and 2 in the morning. Not good. The hour before it was 1631 (and that includes the remaining genuine users before they went to bed); the hour after gave me 287. So clearly, I had found the time window.

So I broke it down further into ten-minute slots:

1:00 - 1:10 280

1:10 - 1:20 1751

1:20 - 1:30 1767

1:30 - 1:40 1630

1:40 - 1:50 39

1:50 - 2:00 195

So a time window of 30 minutes with way higher traffic than usual. In a bigger forum, or one with an international audience distributed across time zones, probably no one would have noticed. Again the advantage of running a small local forum in laboratory mode.
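One way to get such a ten-minute breakdown in a single pass is sketched below. It assumes the log uses the common "combined" access log layout, where field 4 holds the timestamp (`[18/Mar/2026:01:12:34`) and field 9 the status code; the function name `bucket_counts` is made up for this example. Matching on field 9 also avoids counting lines where ` 200` merely shows up as a response size, which a plain `grep " 200"` can do.

```shell
# Count status-200 requests per 10-minute bucket within one hour.
# Assumption: combined log format (field 4 = "[dd/Mon/yyyy:HH:MM:SS",
# field 9 = HTTP status). Adjust the field numbers for other layouts.
bucket_counts() {
    # $1 = log file, $2 = date and hour prefix, e.g. "18/Mar/2026:01"
    awk -v prefix="[$2:" '
        index($4, prefix) == 1 && $9 == 200 {
            # The two characters after the prefix are the minute.
            minute = substr($4, length(prefix) + 1, 2)
            bucket = int(minute / 10) * 10
            count[bucket]++
        }
        END {
            # Print all six buckets, including empty ones.
            for (b = 0; b < 60; b += 10)
                printf "%02d-%02d %d\n", b, b + 10, count[b]
        }
    ' "$1"
}

# Usage (hypothetical file name):
#   bucket_counts web.log "18/Mar/2026:01"
```

Each output line is the minute range followed by the number of matching requests, so a burst like the one above stands out immediately.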

My 5662 entries came from 1373 different IP addresses. 1156 of them had just one single entry in the log file, so basically made one single call, and another 70 had two entries - clearly not your genuine visitors.
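Numbers like these can be derived with a small histogram of requests per client. The following is only a sketch under the same assumption as before (combined log format with the client address or hostname in field 1, the timestamp in field 4 and the status in field 9); `requests_per_client_histogram` is a name invented for this example.

```shell
# For one hour of the log, print how many distinct clients made N
# successful requests. Output lines: "<requests> <clients>".
# Assumption: combined log format (field 1 = client, field 4 = timestamp,
# field 9 = HTTP status).
requests_per_client_histogram() {
    # $1 = log file, $2 = date and hour prefix, e.g. "18/Mar/2026:01"
    awk -v prefix="[$2:" '
        index($4, prefix) == 1 && $9 == 200 { hits[$1]++ }
        END {
            # Turn "hits per client" into "clients per hit count".
            for (client in hits) clients[hits[client]]++
            for (n in clients) print n, clients[n]
        }
    ' "$1" | sort -n
}

# Usage (hypothetical file name):
#   requests_per_client_histogram web.log "18/Mar/2026:01"
```

The first output line then shows how many clients made exactly one request, and the sum of the second column is the number of distinct clients.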

I could already see from the hostnames in the log that most of them came from German DSL providers for private customers - clearly residential proxies. Finally they got me: while I successfully block residential proxies from a lot of countries through country or ASN blocking, because I don't have regular visitors from there, I cannot do that within Germany, as my core audience comes from there.

Having in mind the claims of residential proxy network providers about millions of residential proxies within Germany, I was curious which providers they were coming from. The admin's Swiss army knife, the combo of grep, awk, dig, netcat and a little shell scripting, let me feed the IPs into the fabulous free service of Team Cymru to get the ASNs for the IPs in question and aggregate them. It turned out there were not too many surprises: almost all of the requesting IPs belonged to private DSL connections whose owners were enjoying their sleep. The ASNs, sorted by number of distinct requesting IPs during the time frame:

355: AS3209 (Vodafone)

232: AS3320 (DTAG Deutsche Telekom)

226: AS8881 (Versatel)

173: AS6805 (Telefonica Germany)

132: AS31334 (Kabel-Deutschland)

94: AS7922 (COMCAST) - an outlier from the US, nicknamed Spamcast for more than 20 years

92: AS60294 (DE-DGW Deutsche Glasfaser Wholesale)

41: AS46375 (Sonic Telecom LLC, US)

39: AS42184 (TKRZ Stadtwerke GmbH) - a small local provider that I never heard of before

27: AS202208 (teutel GmbH) - another small local provider

22: AS8374 (Plusnet) from Poland

14: AS207790 (SWN Stadtwerke Neumuenster GmbH) - another small local provider

There were a couple with fewer than ten IPs as well, and these were small ISPs. The ordered list pretty much reflects market share among German DSL/cable providers.

So the bad news is: there are indeed residential proxies in Germany, and there are many. And I currently have no tool to keep them out of my forum. Time to get creative.

The good news is: as I massively limited guest viewing a couple of weeks ago, they can scrape a bit, but not very much.

Given that all of this came out of nowhere and peaked massively through distributed requests from loads of IPs, it is pretty safe to assume that this was one single player using zombie hosts to scrape my forum. I still can barely believe that those people knowingly rented out their internet connections as zombies.
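For anyone who wants to reproduce this kind of aggregation, here is a rough sketch, not the exact script used for the list above. It relies on the bulk mode of Team Cymru's whois service (a list of IPs wrapped in `begin`/`end`, with `verbose` requesting the pipe-separated format that includes the AS name). The function names `build_cymru_query` and `aggregate_by_asn` are invented for this example, and the input is assumed to be plain IP addresses, one per line, so hostnames from the log would have to be resolved first.

```shell
# Wrap a list of IPs (one per line on stdin) in the bulk-query envelope
# for Team Cymru's whois service; duplicates are removed first.
build_cymru_query() {
    echo "begin"
    echo "verbose"
    sort -u
    echo "end"
}

# Read the pipe-separated verbose reply on stdin and count reply lines
# per ASN. Output: "<count> AS<number> <AS name>", largest count first.
# The banner line and entries without a numeric ASN ("NA") are skipped.
aggregate_by_asn() {
    awk -F'|' '
        $1 ~ /^[ ]*[0-9]+[ ]*$/ {
            gsub(/^ +| +$/, "", $1)   # trim the ASN column
            gsub(/^ +| +$/, "", $7)   # trim the AS name column
            count[$1]++
            name[$1] = $7
        }
        END {
            for (asn in count)
                printf "%d AS%s %s\n", count[asn], asn, name[asn]
        }
    ' | sort -rn
}

# Usage (hypothetical file name, needs network access):
#   build_cymru_query < ips.txt | nc whois.cymru.com 43 | aggregate_by_asn
```

Sending one bulk query instead of one lookup per IP keeps the load on the free service low.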