
Crazy amount of guests

- Thread starter Levina


- Tags

- bot swarms guests

> Using the following blocklists:

What tooling did you choose to make those work?

On cPanel:

- Countries and ASNs in the firewall's CC_DENY (csf)

- User agents in Apache with SetEnvIfNoCase (headless browsers, missing user agents, scanners, data crawlers, ...)

- FireHOL level 1 list in the lfd blocklist (csf)

Blocking specific countries in South America had the most effect.

We had a large number of guests/bots from amazonaws.com yesterday. We block some countries on the server, but today we have a lot of different ones that normally don't show up.

Now also Brazil, and mostly Argentina.

This is what we have.

```apache
<IfModule mod_setenvif.c>
<Location />
# Headless / automation browsers
SetEnvIfNoCase User-Agent "(selenium|puppeteer|phantomjs|phantom|playwright(-chromium|-webkit|-firefox)?|headlesschrome|cypress|chromiumbot|headlessbot|slimerjs|triflejs|TestCafe|Nightwatch|WebDriverIO|Taiko|RobotFramework|Protractor|Nightmare|CasperJS|ZombieJS|Splash|HtmlUnit|WebKitTestRunner)" bad_bots
# AI / content / data crawlers
SetEnvIfNoCase User-Agent ...
```

This also helps.
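What the `SetEnvIfNoCase User-Agent` rule above does is an unanchored, case-insensitive regex search over the User-Agent header that sets a `bad_bots` flag. A minimal Python sketch of that decision, assuming a shortened, hypothetical subset of the token list and assuming a missing or blank User-Agent is also treated as a bad bot (per the "missing user-agents" point earlier); it illustrates the matching, not Apache's implementation:

```python
import re
from typing import Optional

# Hypothetical, shortened subset of the headless/automation tokens above.
# re.IGNORECASE mirrors the "NoCase" in SetEnvIfNoCase, and re.search (rather
# than re.match) mirrors the unanchored regex: a token may appear anywhere.
BAD_BOT_RE = re.compile(
    r"(selenium|puppeteer|phantomjs|playwright|headlesschrome|cypress|htmlunit)",
    re.IGNORECASE,
)


def is_bad_bot(user_agent: Optional[str]) -> bool:
    """Return True if a request with this User-Agent would get the bad_bots flag."""
    # Assumption: a missing or blank User-Agent is flagged as well.
    if user_agent is None or not user_agent.strip():
        return True
    return BAD_BOT_RE.search(user_agent) is not None


if __name__ == "__main__":
    for ua in (
        "Mozilla/5.0 (X11; Linux x86_64) HeadlessChrome/120.0.0.0 Safari/537.36",
        "Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:121.0) Gecko/20100101 Firefox/121.0",
        "",
    ):
        print(is_bad_bot(ua), repr(ua))
```

A list like this only catches bots that announce themselves; anything sending a normal browser string gets through, which is why it is paired with the country/ASN and FireHOL blocking above.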

- GitHub: firehol/blocklist-ipsets (ipsets dynamically updated with FireHOL's update-ipsets.sh script)

- FireHOL IP Lists (iplists.firehol.org): 350+ IP blacklists, IP blocklists and IP reputation feeds about cybercrime, fraud, botnets, malware, viruses, abuse, attacks, open proxies and anonymizers, with their changes and updates, size over time, retention policy, geographic coverage, comparisons and overlaps.

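In the setup above, csf/lfd downloads the FireHOL list and does the blocking itself. Purely to show what such a list amounts to, here is a hedged Python sketch that parses netset-style content (one address or CIDR per line, `#` for comments, the layout of FireHOL's `.netset` files) and checks a client address against it. The function names and the sample entries (documentation ranges) are made up for illustration:

```python
import ipaddress
from typing import Iterable, List, Union

Network = Union[ipaddress.IPv4Network, ipaddress.IPv6Network]


def parse_netset(lines: Iterable[str]) -> List[Network]:
    """Parse netset-style lines: one IP or CIDR per line, '#' starts a comment."""
    networks: List[Network] = []
    for raw in lines:
        entry = raw.split("#", 1)[0].strip()
        if not entry:
            continue  # blank or comment-only line
        # strict=False tolerates entries with host bits set, e.g. 192.0.2.1/24.
        networks.append(ipaddress.ip_network(entry, strict=False))
    return networks


def is_listed(ip: str, networks: Iterable[Network]) -> bool:
    """True if `ip` falls inside any listed network (v4 vs v6 never match)."""
    addr = ipaddress.ip_address(ip)
    return any(addr in net for net in networks)


SAMPLE = """# hypothetical sample, not real list contents
192.0.2.0/24
198.51.100.7  # single host
2001:db8::/32
"""

if __name__ == "__main__":
    nets = parse_netset(SAMPLE.splitlines())
    print(is_listed("192.0.2.55", nets), is_listed("203.0.113.1", nets))
```

A linear scan over tens of thousands of entries per request would be slow, which is one reason lists of this size are normally left to the firewall rather than checked in application code.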

> Interesting. Typically people realize that AWS is not a cheap option after they've moved to the cloud to save money.

Don't get me wrong, but AWS is excellent for CloudFront/S3 object hosting, with its global replication network and all. Everything beyond that is (IMHO) big money.

This is a high score for XenForo.com as of a few minutes ago:

View attachment 337529

Must be bad out there

> "guests: 95,699" for me, atm.

Interestingly enough, things have been slow for me over the past 72 hours... granted, Anubis is just humming along without incident.

However, I did notice something quite interesting this past weekend. I now have what appears to be a massive botnet firing hellacious rainbow/dictionary-style queries at my name servers. As soon as one of these drones gets a DNS response with A or AAAA records, I almost instantly get hits on HTTPS/443 for that very subdomain. This seems to be a new form of scraper/prober. I have no clue whether this is an evolution of these AI/LLM scrapers, but it sure feels suspect.

Back when DNS reflection attacks were the 'hot chick' among DDoS vectors, I had already put rate limits in place. These queries are being automatically rate-limited by named/BIND, and the worst offenders are matched and blocked by ConfigServer Security & Firewall (csf) into iptables, replicated across my various servers.

One of the most recent attacks was more than 20k queries/query attempts in a matter of mere seconds, from more than 1,500 unique IP addresses all querying the same names, across IPv4 and IPv6. It was sudden enough that named/BIND could not rate-limit it properly; it went well over the defined slip and drop limits. A jump in queries per second that large and that abrupt gave me pause.
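The "slip and drop limits" are BIND's response rate limiting (the `rate-limit` options such as `responses-per-second` and `slip`). As a rough mental model only, here is a Python sketch: responses are counted per client netblock per second, excess responses are dropped, and every `slip`-th limited response is sent back truncated so a real client can retry over TCP. BIND's actual algorithm differs (it also tracks the response content and averages over a `window`); the class name and per-second bucketing are assumptions, while the /24 and /56 netblock sizes and slip 2 follow BIND's documented defaults:

```python
import ipaddress
from collections import defaultdict
from typing import DefaultDict, Tuple, Union

Net = Union[ipaddress.IPv4Network, ipaddress.IPv6Network]


class RrlSketch:
    """Simplified RRL model with 'slip' (hypothetical, not BIND's code)."""

    def __init__(self, responses_per_second: int, slip: int = 2,
                 v4_prefix: int = 24, v6_prefix: int = 56) -> None:
        self.rps = responses_per_second
        self.slip = slip  # 0 = always drop limited responses
        self.v4_prefix = v4_prefix
        self.v6_prefix = v6_prefix
        # (netblock, whole second) -> responses seen; grows without bound here.
        self._counts: DefaultDict[Tuple[Net, int], int] = defaultdict(int)
        # netblock -> number of responses that hit the limit so far
        self._limited: DefaultDict[Net, int] = defaultdict(int)

    def _netblock(self, client_ip: str) -> Net:
        addr = ipaddress.ip_address(client_ip)
        prefix = self.v4_prefix if addr.version == 4 else self.v6_prefix
        return ipaddress.ip_network(f"{addr}/{prefix}", strict=False)

    def decide(self, client_ip: str, now: float) -> str:
        """Return 'answer', 'truncate' (TC=1) or 'drop' for one response."""
        block = self._netblock(client_ip)
        key = (block, int(now))
        self._counts[key] += 1
        if self._counts[key] <= self.rps:
            return "answer"
        self._limited[block] += 1
        if self.slip and self._limited[block] % self.slip == 0:
            return "truncate"
        return "drop"
```

The counters here are never expired; a real implementation would age them out.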

At first, I wanted to say this was a DNS poisoning attempt, but it's far from that attack mechanism.
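Correlating the name server's log with the web server's log is one way to confirm the "resolved, then probed within moments" pattern described above. A hedged Python sketch, assuming both logs have already been parsed into records; `DnsAnswer`, `HttpsHit`, their fields and the 5-second window are hypothetical, and the example.com names are placeholders:

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, Iterable, List, Set


@dataclass(frozen=True)
class DnsAnswer:
    ts: float   # epoch seconds when an A/AAAA answer was sent (hypothetical field)
    qname: str  # the name that was resolved


@dataclass(frozen=True)
class HttpsHit:
    ts: float   # epoch seconds of the HTTPS request
    host: str   # requested host (SNI or Host header)


def _norm(name: str) -> str:
    # DNS names are case-insensitive; drop the trailing root dot.
    return name.lower().rstrip(".")


def resolve_then_probe(answers: Iterable[DnsAnswer], hits: Iterable[HttpsHit],
                       window: float = 5.0) -> Set[str]:
    """Return names requested over HTTPS within `window` seconds after being resolved."""
    answered: Dict[str, List[float]] = defaultdict(list)
    for a in answers:
        answered[_norm(a.qname)].append(a.ts)
    flagged: Set[str] = set()
    for h in hits:
        name = _norm(h.host)
        # Only hits that come *after* an answer, and soon after, count.
        if any(0.0 <= h.ts - t <= window for t in answered.get(name, [])):
            flagged.add(name)
    return flagged
```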

I also give a 403 to bots scanning for specific files (like WordPress).
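On Apache this would typically be a mod_alias rule such as `RedirectMatch 403` or an access-control rule; the decision itself is just a path check. A hedged Python sketch with a hypothetical list of WordPress paths that a site not running WordPress can refuse outright:

```python
from urllib.parse import urlsplit

# Hypothetical list of paths that only matter on WordPress sites; a forum
# that doesn't run WordPress can refuse them outright.
WORDPRESS_PROBES = (
    "/wp-admin",
    "/wp-login.php",
    "/wp-content/",
    "/wp-includes/",
    "/wp-config.php",
    "/xmlrpc.php",
)


def status_for(request_target: str) -> int:
    """Return 403 for WordPress probe paths, 200 (placeholder for normal handling) otherwise."""
    # Ignore the query string and compare case-insensitively.
    path = urlsplit(request_target).path.lower()
    return 403 if path.startswith(WORDPRESS_PROBES) else 200
```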

Was going to say, if you're using nginx, return 444 to the bad actors (nginx's non-standard code that closes the connection without sending any response). For some reason, 444 completely breaks most of these poorly coded bots/scrapers (hint: you'll get request hammering from time to time). But alas, you're using Apache for your web services.

> I now have (what appears to be) a massive botnet performing hellacious rainbow/dictionary requests on my name servers.

> One of the most recent attacks was more than 20k queries/query-attempts in a matter of mere seconds, spanning more than 1,500 unique IP addresses all querying the same things, across IPv4 and IPv6.

Wow. Sometimes one would like to have the virtual SWAT team with its black minibus in place for the people who do these kinds of things.

It sure has gotten annoying since 'AI vibe coding' took off. These things use the same style of probing/flooding attacks as decades past, but now they are much more distributed and, in some cases, much more bandwidth- and resource-intensive. Back then, the skiddies would at least rate-limit their attacks and probe over time.

It's hardly even worth trying to find the originating attacker/group. Some of these attacks are very likely state-sponsored. The best course of action is simply to filter proactively.

That would be a better option. At the moment we temporarily ban an IP that repeatedly hits 403.
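Temporarily banning an IP that repeatedly hits 403 is the same idea fail2ban expresses with `maxretry`, `findtime` and `bantime`. A hedged, in-memory Python sketch of that sliding-window logic; the class name and default values are hypothetical, and a real deployment would work from log lines and push the ban into the firewall instead:

```python
from collections import defaultdict, deque
from typing import Deque, Dict


class TempBan:
    """fail2ban-style rule: `maxretry` 403s within `findtime` seconds -> ban for `bantime`."""

    def __init__(self, maxretry: int = 5, findtime: float = 60.0, bantime: float = 3600.0) -> None:
        self.maxretry = maxretry
        self.findtime = findtime
        self.bantime = bantime
        self._hits: Dict[str, Deque[float]] = defaultdict(deque)
        self._banned_until: Dict[str, float] = {}

    def is_banned(self, ip: str, now: float) -> bool:
        return self._banned_until.get(ip, 0.0) > now

    def record(self, ip: str, status: int, now: float) -> bool:
        """Record one response; return True if this response triggers a ban."""
        if status != 403 or self.is_banned(ip, now):
            return False
        hits = self._hits[ip]
        hits.append(now)
        # Forget 403s that have fallen out of the sliding window.
        while hits and now - hits[0] > self.findtime:
            hits.popleft()
        if len(hits) >= self.maxretry:
            self._banned_until[ip] = now + self.bantime
            hits.clear()
            return True
        return False
```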

BrettC, thanks for mentioning this.

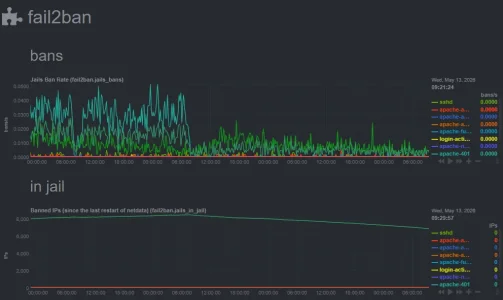

I'm not noticing an uptick in bots or CPU consumption here, but I looked at my fail2ban history and noticed it has been very busy rate-limiting 401s.

I rate-limit 404s, 403s, and 401s, and also how fast POSTs are being submitted; these are all related to probing activity.

They must not have been very well distributed if fail2ban picked up so many of them.

I don't run my own DNS, so no signals there.
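Limiting "how fast you are submitting POSTs" can be expressed as a token bucket. This is a swapped-in technique for illustration, not how fail2ban works internally (fail2ban counts matching log lines). A hedged Python sketch; the capacity and refill rate are hypothetical:

```python
from collections import defaultdict
from typing import Dict, Optional


class TokenBucket:
    """Allow bursts of up to `capacity` POSTs, refilled at `rate` tokens per second."""

    def __init__(self, capacity: float, rate: float) -> None:
        self.capacity = capacity
        self.rate = rate
        self.tokens = capacity
        self.last: Optional[float] = None

    def allow(self, now: float) -> bool:
        """Spend one token if available; return False when the client is posting too fast."""
        if self.last is not None:
            # Refill in proportion to elapsed time, never above capacity.
            elapsed = max(0.0, now - self.last)
            self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


# Hypothetical policy: bursts of 3 POSTs, then one every 2 seconds, per client IP.
_buckets: Dict[str, TokenBucket] = defaultdict(lambda: TokenBucket(capacity=3, rate=0.5))


def allow_post(ip: str, now: float) -> bool:
    return _buckets[ip].allow(now)
```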


They hit 250k a couple weeks back. I uploaded a screenshot somewhere on the forum here.

Very interesting metrics!

As for DNS, hosting it yourself rather than relying on a third-party provider to host your DNS 'stuff' is the most liberating thing you can do. For starters, you gain significant insight into, and analytical detail on, the queries being made to your servers.

The only real drawback is the potential for massive DNS floods and DDoS attacks to/from port 53, since DNS runs mostly over UDP (reflection / source-IP spoofing). Once you get a handle on that with the RRL feature set (if using named/BIND), you have near-real-time control over all your domains' records (A, AAAA, MX, etc.). You can then collect a proper heap of metrics from the client ISPs' resolvers that access your services... before the actual client accesses a website under your management/control.

Hosting your own name servers can show you where some of these botnets originate. Blocking their resolvers from querying your name servers actually slams the door shut, and the botnets in question end up seeing NXDOMAIN on the local clients that use that resolver. Ultimately, that can mean the botnets/scraper-nets never even hit your servers' ingest point to begin with. It's not 100% by any means, as many clients use Cloudflare, Google DNS or OpenDNS, or run their own local resolver, bypassing the ISP resolver.
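Collecting metrics from client ISPs' resolvers can start as simply as counting queries per resolver netblock before deciding what to block. A hedged Python sketch, assuming the query source addresses have already been extracted from the name server's query log; the function name and the /24 and /48 grouping are arbitrary choices:

```python
import ipaddress
from collections import Counter
from typing import Iterable, List, Tuple


def top_resolvers(query_sources: Iterable[str], n: int = 3,
                  v4_prefix: int = 24, v6_prefix: int = 48) -> List[Tuple[str, int]]:
    """Count queries per resolver netblock and return the `n` busiest."""
    counts: Counter = Counter()
    for src in query_sources:
        addr = ipaddress.ip_address(src)
        prefix = v4_prefix if addr.version == 4 else v6_prefix
        # Group nearby resolver addresses (e.g. an ISP's resolver pool) together.
        block = ipaddress.ip_network(f"{addr}/{prefix}", strict=False)
        counts[str(block)] += 1
    return counts.most_common(n)
```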

Goes back to the saying: It's always DNS.

This just shows what was said earlier, though: if you have a well-tuned server, none of it matters. It takes time to chase nasties around the internet, and even when you think you've caught them, they change IPs/ASNs or use residential proxies. You can tune and forget, or spend time daily/weekly trying to stop it all. There is a healthy middle ground IMO that takes maybe 5 minutes a week, but they still shift faster than you will ever block.

Guest caching at a CDN alone saves your server from doing 60-70% of its work.
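Guest caching at the CDN usually hinges on the origin telling the CDN which responses are safe to share. A hedged Python sketch of that decision, assuming a CDN that honors `s-maxage`; the cookie name used to detect a logged-in member is a hypothetical placeholder (check what your forum software actually sets), and the 60-second lifetime is arbitrary:

```python
from typing import Mapping

# Hypothetical cookie name marking a logged-in member; verify against your setup.
LOGIN_COOKIE = "xf_user"


def cdn_cache_control(method: str, cookies: Mapping[str, str]) -> str:
    """Pick a Cache-Control header: let the CDN cache guest GET/HEAD pages only."""
    is_guest = LOGIN_COOKIE not in cookies
    if method.upper() in ("GET", "HEAD") and is_guest:
        # s-maxage applies to shared caches (the CDN); browsers revalidate (max-age=0).
        return "public, max-age=0, s-maxage=60"
    # Logged-in pages and state-changing requests must never be shared.
    return "private, no-store"
```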

Here:

I assume XF does absolutely nothing against scrapers and bots. For their business purposes, it does no harm (and rather helps) if AI knows about their product.
