TheBigK

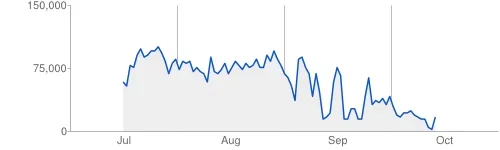

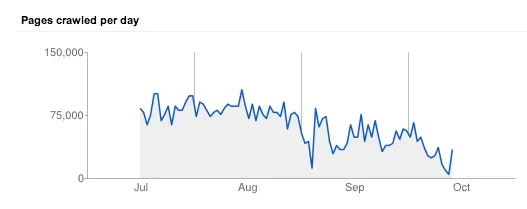

Looks like our affair with Googlebot isn't ending. I checked Google Webmaster Tools and it's reporting the following:

Googlebot found an extremely high number of URLs on your site

Googlebot encountered problems while crawling your site http://www.crazyengineers.com

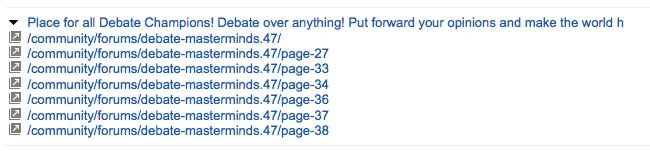

Googlebot encountered extremely large numbers of links on your site. This may indicate a problem with your site's URL structure. Googlebot may unnecessarily be crawling a large number of distinct URLs that point to identical or similar content, or crawling parts of your site that are not intended to be crawled by Googlebot. As a result Googlebot may consume much more bandwidth than necessary, or may be unable to completely index all of the content on your site.

Here's a list of sample URLs with potential problems. However, this list may not include all problematic URLs on your site.

- http://www.crazyengineers.com/community/forums/ce-infocus.52/page-204?order=view_count

- http://www.crazyengineers.com/community/find-new/3047035/threads

- http://www.crazyengineers.com/community/find-new/1251351/threads?page=6

- http://www.crazyengineers.com/community/find-new/2601881/threads?page=3

- http://www.crazyengineers.com/community/find-new/3490322/threads?page=6

- http://www.crazyengineers.com/community/find-new/3733339/threads?page=2
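To get a rough idea of which URL shapes dominate that sample, here is a minimal Python sketch that collapses numeric IDs and query values so similar URLs fall into the same bucket. The helper names (`url_pattern`, `count_patterns`) are made up for illustration, and the input is only the six sample URLs Google listed, not the full set of affected URLs.

```python
import re
from collections import Counter
from urllib.parse import urlsplit

# The six sample URLs from the Webmaster Tools message.
SAMPLE_URLS = [
    "http://www.crazyengineers.com/community/forums/ce-infocus.52/page-204?order=view_count",
    "http://www.crazyengineers.com/community/find-new/3047035/threads",
    "http://www.crazyengineers.com/community/find-new/1251351/threads?page=6",
    "http://www.crazyengineers.com/community/find-new/2601881/threads?page=3",
    "http://www.crazyengineers.com/community/find-new/3490322/threads?page=6",
    "http://www.crazyengineers.com/community/find-new/3733339/threads?page=2",
]


def url_pattern(url: str) -> str:
    """Collapse IDs and query values so similar URLs share one pattern (hypothetical helper)."""
    parts = urlsplit(url)
    # Replace every run of digits in the path with a placeholder.
    path = re.sub(r"\d+", "{n}", parts.path)
    # Keep only the query parameter names, not their values.
    params = sorted(p.split("=", 1)[0] for p in parts.query.split("&") if p)
    return path + ("?" + "&".join(params) if params else "")


def count_patterns(urls):
    """Count how many URLs fall under each collapsed pattern."""
    return Counter(url_pattern(u) for u in urls)


if __name__ == "__main__":
    for pattern, count in count_patterns(SAMPLE_URLS).most_common():
        print(count, pattern)
```

On this sample, five of the six URLs fall under `/community/find-new/`, each with a different numeric ID, so that looks like the first place to check. The same grouping could be run on a longer list pulled from the server access logs, assuming those are available.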

I have absolutely no clue what's going wrong. In the last ~11 months of running XenForo, I've never had this issue, and I haven't made any change to the website that could have caused it.

Can someone inspect our site and see if anything needs to be fixed?