Damn, you had to ban Linux users, huh

I wouldn't say that I've banned Linux users, but I am filtering out HeadlessChrome UAs.

If they are using real browsers, then yes, they can get around almost anything. The more people who use Anubis, the higher the CPU tax will be on these bot farms, and we could theoretically make the job too expensive if tons of people use something like Anubis, so it's a good thing your site is making them pay the tax. I think the only way it could work, though, is if everyone makes them pay the tax.
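To put a rough number on that "CPU tax", here is a minimal sketch of a generic hash-based proof of work. It assumes the challenge is "find a nonce so that the SHA-256 hex digest starts with N zeros", which may not match Anubis's exact scheme; the function names and the challenge string format are made up for illustration.

```python
import hashlib
from itertools import count


def verify(challenge: str, nonce: int, difficulty: int) -> bool:
    """Server side: a single hash checks the client's answer."""
    digest = hashlib.sha256(f"{challenge}{nonce}".encode()).hexdigest()
    return digest.startswith("0" * difficulty)


def solve(challenge: str, difficulty: int) -> int:
    """Client side: brute-force nonces until one verifies."""
    for nonce in count():
        if verify(challenge, nonce, difficulty):
            return nonce


def expected_hashes(difficulty: int) -> int:
    """Average work per solve: each extra hex zero multiplies it by 16."""
    return 16 ** difficulty
```

Under this assumption a level of 5 costs roughly a million hashes per solve and each extra level costs 16 times more, while the server still verifies with one hash. That asymmetry is the tax, and it only bites if the bots have to pay it on a lot of sites.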

We may join you in helping deliver 1 of the 1000 tiny cuts needed soon.


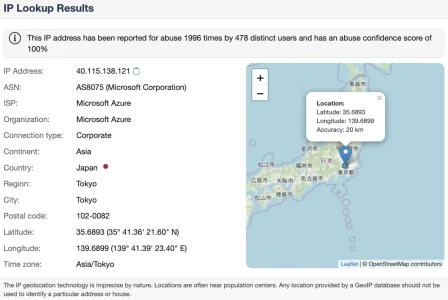

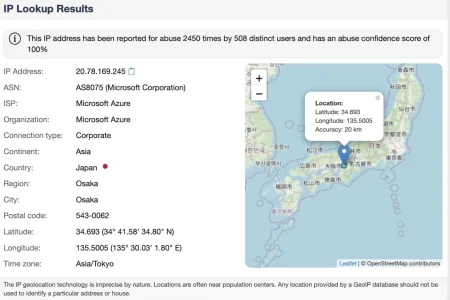

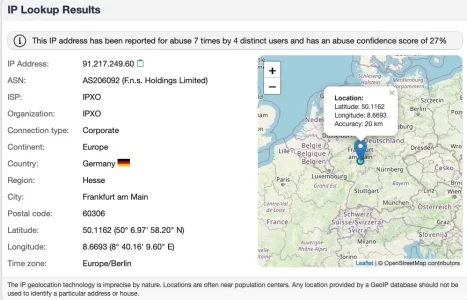

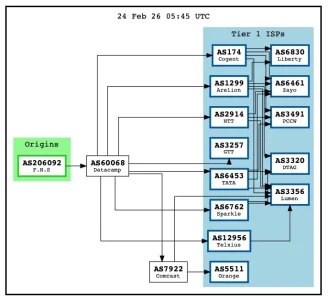

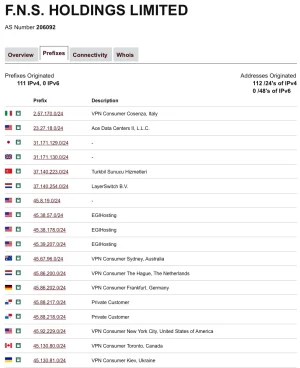

I notice they are getting more clever and more distributed too.

The number of guests on my site now reflects volume less and frequency of rotation more.

I have yet to go in there and look for more subnets to ban. I'm thinking of writing something that automatically bans those.
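Something along these lines might be a starting point for the "find subnets automatically" part. It is only a sketch: it assumes you already have a list of client IPs pulled from your logs, and the function name, the /24 grouping, and the threshold of 20 distinct addresses are arbitrary placeholders, not tested values.

```python
import ipaddress
from collections import Counter


def busy_subnets(ips, prefix_len=24, min_distinct=20):
    """Return IPv4 subnets that contain at least `min_distinct` distinct client IPs."""
    counts = Counter()
    for ip in set(ips):  # count each address once, however many hits it made
        try:
            addr = ipaddress.ip_address(ip)
        except ValueError:
            continue  # skip malformed log entries
        if addr.version != 4:
            continue  # IPv6 would need a different prefix length
        net = ipaddress.ip_network(f"{addr}/{prefix_len}", strict=False)
        counts[net] += 1
    flagged = [net for net, n in counts.items() if n >= min_distinct]
    return [str(net) for net in sorted(flagged)]
```

For example, `busy_subnets(ips, min_distinct=20)` returns candidate blocks as CIDR strings. I would review the output by hand before banning anything, since residential proxies spread traffic across subnets that real users also sit in.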

HeadlessChrome UAs come from a version of Chrome that has absolutely no GUI, which generally translates to a bot/scraper.

Unfortunately, today's event wasn't a good sign. Anubis was being solved by some clients at a challenge level of 5, and going higher than that tends to become problematic for legitimate users. I guess I can apply much more surgical challenge levels to clients matching certain browser criteria. Upping challenge levels for whole CIDR blocks isn't an ideal method any more now that residential proxies are being used.
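As a rough illustration of what "surgical" could look like, here is a sketch that picks an action per request from the User-Agent instead of from the client's CIDR block. This is not Anubis configuration; the function name, the difficulty numbers, and the "old Chrome major version" criterion are all hypothetical stand-ins for whatever browser criteria actually show up in the logs.

```python
import re

# Captures the major version from a "Chrome/<major>" token in the User-Agent.
CHROME_MAJOR = re.compile(r"Chrome/(\d+)")


def decide(user_agent, base_difficulty=4, raised_difficulty=5, min_chrome_major=100):
    """Return (action, difficulty) for one request based on its User-Agent."""
    # Headless Chrome announces itself in the UA, so filter it outright.
    if "HeadlessChrome" in user_agent:
        return ("deny", 0)
    # Hypothetical criterion: suspiciously old Chrome builds get a harder challenge.
    match = CHROME_MAJOR.search(user_agent)
    if match and int(match.group(1)) < min_chrome_major:
        return ("challenge", raised_difficulty)
    # Everyone else keeps the normal challenge level.
    return ("challenge", base_difficulty)
```

The point of the design is that the harder challenge follows the browser fingerprint rather than the address, so a legitimate user who happens to share a residential range with a proxy exit is not punished for it. A User-Agent is trivially spoofed, of course, so this only catches the lazy ones.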

Ugh... just ugh.