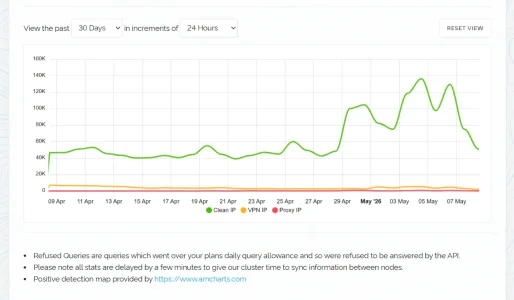

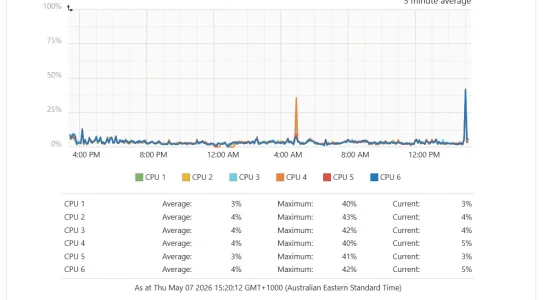

I was hit again recently, after about 45 days of nothing. They added about 150k daily uniques on top of my normal traffic. With everything locked down to CF IPs only, /search/ on a managed challenge (the failure rate went up with their attempts), and XTR Threat Monitor running, they gave up after 8 days. When I was unprotected that used to be a million+, and it can't get through any longer. All I had to do was upgrade my proxycheck.io account during the attack to make sure I had enough API calls, and that was it. XTR Threat Monitor completely covered the residential proxy attack, banning nearly all of the additional daily traffic coming from VPNs or proxies. Either way, the load on the server didn't change at all, and after error page on error page their software got the message to just quit and move along.
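For anyone wondering what "locked down to CF IPs only" boils down to, here is a minimal sketch of the check in Python. In practice this normally lives in a firewall or web server rule, not application code, and I haven't described exactly how mine is done, so treat this as an illustration only. The `CLOUDFLARE_RANGES` list and the `is_from_cloudflare` name are made up for the example, and the ranges are just a small subset of Cloudflare's published IPv4 list, which changes over time.

```python
import ipaddress

# Illustrative subset of Cloudflare's published IPv4 ranges (assumption:
# the real list is longer and changes, so load the current one from Cloudflare).
CLOUDFLARE_RANGES = [
    ipaddress.ip_network(cidr)
    for cidr in (
        "173.245.48.0/20",
        "104.16.0.0/13",
        "172.64.0.0/13",
        "162.158.0.0/15",
    )
]


def is_from_cloudflare(remote_addr: str) -> bool:
    """Return True if the connecting address is inside an allowed range."""
    try:
        addr = ipaddress.ip_address(remote_addr)
    except ValueError:
        # Not a parseable IP address at all: treat as not allowed.
        return False
    return any(addr in net for net in CLOUDFLARE_RANGES)


if __name__ == "__main__":
    for ip in ("104.16.0.1", "8.8.8.8"):
        print(ip, is_from_cloudflare(ip))
```

Anything that fails this check never reaches the site directly, which is why traffic can only arrive through CF and its rules.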

I just don't think this is that difficult to stop. I'm not saying it won't get harder in the future, but right now my minimal, unobtrusive setup works against this while allowing the world access (only a handful of the worst ASNs, all of them server networks rather than ISPs, are on a managed challenge). Not a single country is blocked or managed challenged. I am not a server tech; I use AI to guide me through the logical steps of identifying the issue and then stopping it in a way that doesn't screw real people or legit bots around.

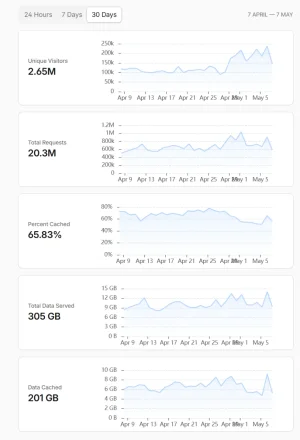

Just pages back in this discussion I was getting 800k to 1M daily uniques; below I have just over 100k daily now (real traffic), so about 85% of the traffic was garbage. I listened to the advice given here about locking down the server to CF and did that, which fixed a large problem, thank you. But the biggest fix was /search/, combined with proxycheck.io, which is just nailing its accuracy at blocking residential proxies. /search/ was the majority of the load on my server that I was fighting.
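To give a rough idea of the kind of decision a proxycheck.io lookup feeds into, here is a small Python sketch. I don't know how XTR Threat Monitor handles this internally, so this is not its code. The response shape (a dict keyed by the IP, with a `"proxy"` field of `"yes"` or `"no"`) is my assumption based on the proxycheck.io v2 JSON replies, and `should_block` is a made-up name for the example.

```python
def should_block(ip: str, response: dict) -> bool:
    """Decide whether to block, given a parsed proxycheck.io-style JSON reply.

    Assumed shape (not verified here):
        {"status": "ok", "<ip>": {"proxy": "yes", "type": "VPN"}}
    """
    entry = response.get(ip)
    if not isinstance(entry, dict):
        # No usable result for this IP: fail open so a lookup problem
        # never locks out a real user.
        return False
    return entry.get("proxy") == "yes"


if __name__ == "__main__":
    # 203.0.113.7 is a documentation address used purely as sample data.
    sample = {"status": "ok", "203.0.113.7": {"proxy": "yes", "type": "VPN"}}
    print(should_block("203.0.113.7", sample))
```

Failing open matters here: if the API quota runs out mid-attack, real users still get in, which is also why I upgraded the account rather than let the lookups lapse.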

`(http.request.uri.path contains "/search/" and not http.cookie contains "xf_user=")` on a managed challenge is priceless. That removed a massive load: a single search action involves the search itself, keyword refinement, and sorting and ranking the results (worse if using a DB rather than ES), all before the page can be built and returned. I use ES, which has already mapped every word to a document and outperforms a DB lookup, but it is still a load when compounded per search query by bots attacking it.
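If the rule expression is hard to read, this is the same logic written out as a plain Python function. It is only a mirror of the expression for illustration, not how CF evaluates it, and `would_challenge` is a name I made up.

```python
def would_challenge(path: str, cookie_header: str) -> bool:
    """Mirror of the rule: search URL requested without an xf_user cookie."""
    is_search = "/search/" in path
    has_user_cookie = "xf_user=" in cookie_header
    return is_search and not has_user_cookie


if __name__ == "__main__":
    # Guest (or bot) hitting search: gets the managed challenge.
    print(would_challenge("/search/123/?q=test", ""))
    # Request carrying an xf_user cookie: passes straight through.
    print(would_challenge("/search/123/?q=test", "xf_user=1%2Cabc; xf_csrf=xyz"))
    # Any non-search page: the rule doesn't apply.
    print(would_challenge("/threads/some-thread.1/", ""))
```

Note the rule only checks that the cookie name is present, not that the login is valid, so it is a cheap filter in front of search rather than an authentication check.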

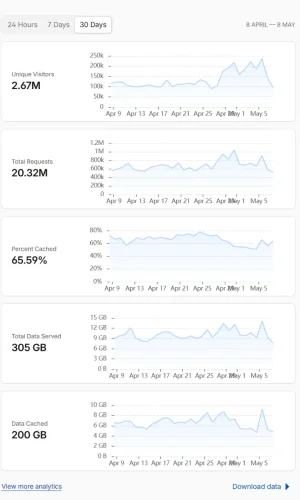

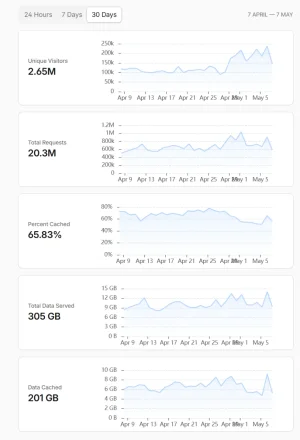

Oh... I also fixed my caching in CF, taking it from about 35% to now 60%+ average.

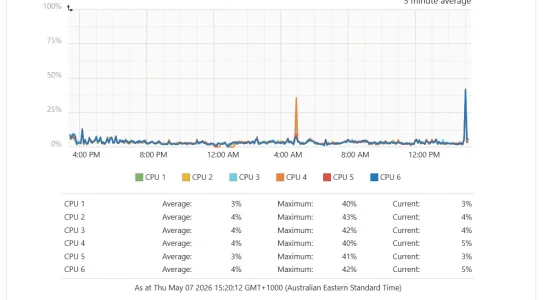

My server is otherwise open to the entire world beyond these minimal measures, so no ISP user is getting stuffed around accessing the site, and there are no cranky users. Pages back I started in a bad place; nearly two months on, the server is tight against this problem, with nothing to really worry about: it hums along nicely and doesn't cause issues for any site or user on it. Backups are typically the biggest spike on any given day.

My proxycheck account was running on the business plans at one point, which is when I had had enough and engaged ChatGPT and Claude to sort the issue out without affecting users. I could give them a log file or snapshot to assess the issue in seconds, and they did just that.

This most recent attack attempt confirmed that the actions taken are correct. I was wondering how long they would keep at it, and today's result is back to 100k uniques (not shown below). After 8 days, either their software gave up or someone looked at a flagged issue, cancelled my site and moved on. This is the confirmation I needed that my server is now in a good place and my users aren't being affected by all these AI scrapers and other nonsense.

Oh, in CF I have every AI bot on allow. I am not blocking any legitimate bot; they just don't access the site much.

My point is: this really is a fixable issue. This thread helped me fix my server. I don't have to manage ASNs or CIDRs daily.
