[XTR] AI Assistant [Paid] 1.0.17

- Thread starter Osman

Osman updated [XTR] AI Assistant with a new update entry:

1.0.13

- New Feature: Added "Reply to unanswered threads (24h)" option for Schedules. Bots can now target threads with zero replies older than 24 hours.

- New Feature: Added "Maximum Thread Age" setting for the unanswered thread logic. This prevents bots from necro-bumping very old threads.

- New Feature: Implemented a distinct reply limit for bot mentions and quotes. Direct interactions now have a separate limit from automated replies...

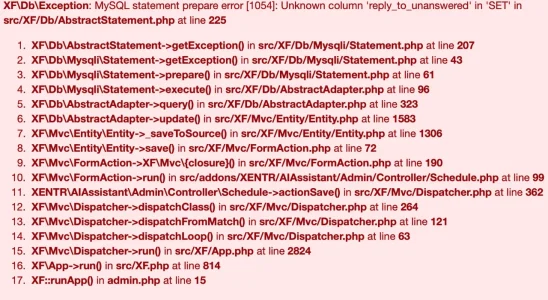

@Osman After updating, the schedule cannot be saved.

This issue has been resolved in version 1.0.14. Please update your add-on.

Thank you, there are no more errors.

What is the correct way to set up the schedule for 24-hour posting? I ask because of "active hours": if you want a bot to respond to a new thread after 24 hours with no replies and you set active hours, it only posts between those hours, which defeats the whole point of posting on new threads that have had no responses for 24 hours.

Osman updated [XTR] AI Assistant with a new update entry:

1.0.15

This update addresses a critical timing issue in the AI Assistant's "Reply to Unanswered Threads" feature.

- Fix: Fixed an issue where the "Reply to unanswered threads (24h)" option in scheduled tasks ignored the time limit.

- Improvement: The scheduler logic has been updated to strictly enforce the "Minimum Thread Age" criteria (default 24h).

What's Changed? In previous versions, even if the "Reply to unanswered threads (24h)" option was enabled in...
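To make the settings in these update entries concrete, here is a minimal Python sketch of how a "zero replies, at least 24 hours old, but not too old" filter could work. It is an illustration only, not the add-on's actual code: the `Thread` record, the `eligible_unanswered` function, and the 7-day maximum age are assumptions made for the example.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Thread:
    # Hypothetical stand-in for a forum thread record.
    thread_id: int
    created_at: datetime
    reply_count: int


def eligible_unanswered(threads, now,
                        min_age=timedelta(hours=24),   # "Minimum Thread Age"
                        max_age=timedelta(days=7)):    # assumed "Maximum Thread Age"
    """Return threads with zero replies whose age lies between min_age and max_age."""
    selected = []
    for thread in threads:
        age = now - thread.created_at
        if thread.reply_count == 0 and min_age <= age <= max_age:
            selected.append(thread)
    return selected


now = datetime(2025, 1, 10, 12, 0)
threads = [
    Thread(1, now - timedelta(hours=2), 0),   # too new
    Thread(2, now - timedelta(hours=30), 0),  # eligible
    Thread(3, now - timedelta(hours=30), 3),  # already answered
    Thread(4, now - timedelta(days=90), 0),   # too old, would be a necro-bump
]
result = [t.thread_id for t in eligible_unanswered(threads, now)]
print(result)  # prints [2]
```

Only thread 2 passes: it has no replies and is older than the minimum age but younger than the maximum age.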

Yes. Works perfectly. Read reviews.

@Osman, are there any plans for AI content moderation? For example, AI-powered thread editing and approval would be a necessity.

I think this would nearly be an entirely new addon, very different attack plan when editing, approvals, sending posts to moderation automatically, etc.

Members have pointed out an issue to me when replying with a quote. If you type out a reply, and use the AI suggestion to rewrite it, it will merge everything together into one post, including the quoted text.

Does your add-on run on my server completely or is there a part of its working system running on your server?

The addon runs on your server, not like a previous developer did with their similar addon. Enter API key and away you go.

Word of warning to anyone hoping you can get the kind of results with this addon that you would get from having a conversation directly with an AI platform.

The AI part of this addon does not perform as well, by a long stretch, regardless of what model you choose in the settings.

I run a football forum and the bot can't accurately answer even basic football trivia questions. The answers it gives tend to be a couple of years old. I tried lowering the Temperature, I've tried numerous Models, nothing works, it just gives inaccurate answers.

I've paid for the addon and paid for the OpenRouter credits and had high hopes, but honestly all that has happened is my forum users laughing at the AI bot's answers and embarrassing me in the process for having introduced the new feature.

The Summarise thread tool is good, and you'll get good results if you use the knowledge base feature, but you can't be expected to constantly fill the knowledge base with new trivia every day (because what's the point of having AI if you're doing the heavy lifting?). If you're looking for a good general AI bot that will chat and answer questions for your forum users, sadly this is not it.

Have you tried Opus? In case of AI assistance, it’s worth looking into Opus which always gives the most accurate and most recent answers. I’ve built a few AI assistance apps for Invision Community and Opus is definitely the best of all models. But worth noting, also the most expensive one.

The fact is, it doesn't matter what model you use, this isn't real AI.

Did you not have to add an API key?

Hello,

Our add-on runs entirely on your own server (where your XenForo instance is hosted). There is absolutely no part of its working system that runs on our servers.

The add-on communicates directly with the official APIs of the AI providers you configure (such as OpenAI, Claude, Gemini, etc.) straight from your server. Since you use your own API keys, all data flow takes place strictly between your forum and the respective AI service provider. There are no intermediary servers (proxies) or data collection mechanisms involved on our end. You can use it with complete peace of mind.
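As a rough illustration of what "straight from your server" means, the following Python sketch builds (but does not send) a chat request addressed directly to a provider endpoint using your own key. This is not the add-on's code, which runs inside XenForo; the `build_chat_request` helper and the placeholder key are assumptions for the example. The endpoint and header shape follow OpenAI's public Chat Completions API.

```python
import json
import urllib.request


def build_chat_request(api_key, model, user_message):
    """Build (but do not send) a chat completion request to the provider."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": user_message}],
    }
    return urllib.request.Request(
        "https://api.openai.com/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",  # your own key, never a vendor's
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Placeholder key for illustration; a real key would come from your provider account.
request = build_chat_request("sk-your-own-key", "gpt-4o", "Hello")
print(request.host)  # the only host involved is the provider's
```

The request targets the provider's host and carries only your key and the message, so no intermediary server appears anywhere in the exchange.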

@Osman this is a great addon and I'm having a good time setting it up and letting my users try it out.

I've found though, this addon is extremely limited by the fact it doesn't recognise the user it is responding to. Can this be changed?

Thank you very much for your feedback. Actually, our add-on is completely capable of recognizing users and handling this information natively; this feature was present during the development phase.

However, we encountered a specific behavior with AI models (OpenAI, Claude, etc.): When we pass the conversational context to the AI in a format like [Osman wrote:] Hello, the AI models tend to perceive this as a strict 'response formatting rule' (mimicking/hallucination). As a result, the bots would start adding weird prefixes to their own generated responses, such as [John said:] or [Bot replying:], which completely breaks the natural flow of the post.

This is not a limitation or a missing feature of the add-on itself, but rather a known trait of current Large Language Models (LLMs) which tend to blindly copy the format of the context provided to them. To prevent these 'hallucinations' and ensure the bot responds as naturally as a real human, we chose to strip these explicit username tags and feed the raw message directly to the AI, which keeps the output clean and natural.
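The tag-stripping step described here could look something like the Python sketch below. It is a guess at the general technique, not the add-on's implementation: the `SPEAKER_TAG` pattern and `strip_speaker_tag` function are hypothetical, and the pattern only covers the three tag shapes mentioned in this post.

```python
import re

# Matches a leading tag such as "[Osman wrote:]", "[John said:]" or "[Bot replying:]".
SPEAKER_TAG = re.compile(
    r"^\s*\[[^\]\n]*(?:wrote|said|replying):\]\s*",
    re.IGNORECASE,
)


def strip_speaker_tag(text):
    """Remove one leading speaker tag, leaving the raw message untouched otherwise."""
    return SPEAKER_TAG.sub("", text, count=1)


history = ["[Osman wrote:] Hello", "[John said:] Any update on 1.0.15?"]
clean = [strip_speaker_tag(message) for message in history]
print(clean)  # prints ['Hello', 'Any update on 1.0.15?']
```

Bracketed text that is not a speaker tag, such as a footnote marker like `[1]`, is left alone because the pattern requires one of the listed verbs followed by a colon.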

Hello, thank you for your feedback. I completely understand your frustration, but there is a fundamental misunderstanding here regarding the 'Knowledge Cutoff' of AI API models, rather than an issue with the add-on itself.

Our add-on does not degrade, restrict, or filter the quality of the AI's intelligence. It simply acts as a flawless bridge directly between the RAW API of the provider (like OpenRouter) and your forum.

The reason you get highly accurate and up-to-date football trivia on ChatGPT’s or Claude’s official websites is because their web interfaces are equipped with Live Web Browsing (Internet Search) capabilities running in the background.

However, when you communicate with these models via API (which is what you are doing through OpenRouter), the models do not have native internet access by default. They can only rely on their pre-trained data from when their training was finalized (e.g., late 2022 or mid-2023). The reason the bot gives answers that are 'a couple of years old' is not due to our add-on; it is simply the training cutoff date of the specific model you selected.

The Solution: Instead of using standard models (like LLaMA or older GPT versions via OpenRouter) for live trivia, you need to use models that natively support internet search grounding capabilities. For example, if you configure your add-on to use Perplexity models via API, it will natively search the internet in real-time and provide the exact high-quality, up-to-date answers your forum users expect.
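The general idea behind grounding is that current facts are placed in front of the model before it answers, so it does not have to rely on its training data. The Python sketch below shows that idea in its simplest form, assuming the facts come from somewhere you control (a search result or a knowledge base entry). The `build_grounded_messages` function and the sample snippet are hypothetical and say nothing about how the add-on or any provider implements it.

```python
from datetime import date


def build_grounded_messages(question, snippets, today):
    """Put dated, current facts ahead of the question in a chat-style message list."""
    facts = "\n".join(f"- {snippet}" for snippet in snippets)
    system = (
        f"Today's date is {today.isoformat()}. "
        "Answer using the facts below; if they do not cover the question, say so.\n"
        f"{facts}"
    )
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": question},
    ]


# Made-up snippet for illustration only.
messages = build_grounded_messages(
    "Who won the cup final this season?",
    ["Example FC won this season's cup final 2-1."],
    date(2025, 6, 1),
)
print(messages[0]["content"])
```

A model with a training cutoff years in the past can still answer correctly here, because the answer is in the prompt rather than in its training data.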

Our add-on is not a localized piece of software with its own limited database or a fake rule-based chatbot.

Our system connects directly to the official APIs (the real AI cores) provided by the world's most advanced technology companies like OpenAI, Google, and Anthropic. The AI model you chat with on the ChatGPT website and the one you connect to via API through our add-on are the exact same intelligence architecture. The add-on does not interfere with, filter, or degrade the AI's intelligence; it merely serves as a pure transmission link between your forum and the AI server.

If you are receiving 'poor' or 'outdated' answers, it is absolutely not because the add-on is restricting the AI. It is entirely due to the training capacity or the knowledge cutoff date of the specific model you selected via OpenRouter (or any other provider).

If you configure the system with more advanced models (like GPT-4o, Claude 3.5 Sonnet) or models capable of live internet searching (like Perplexity), the quality and accuracy of the results you receive will be technically indistinguishable from what you get on the official ChatGPT or Claude web interfaces.