[021] ChatGPT Autoresponder [Deleted]

- Thread starter 021

- Start date

021

Well-known member

> Do you mean the user group permissions? They have all been set.

Do you have any errors in the control panel?

021

Well-known member

> None whatsoever. Really clueless.

Then there are only two options: either the option to receive replies from AI is disabled in the threads, or the permissions are still not configured.

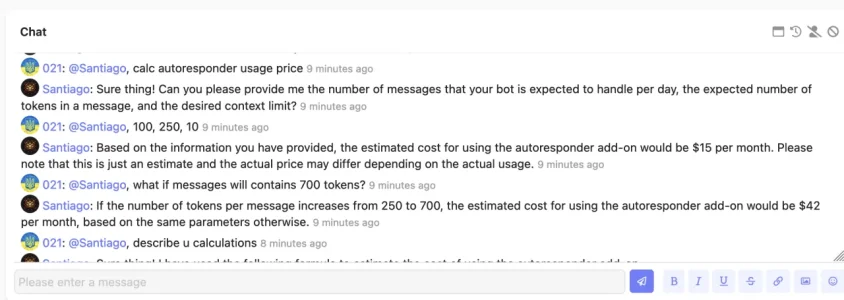

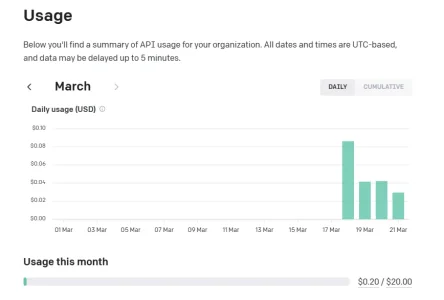

> Any idea what a typical Q&A costs for the API use? I'm thinking of putting this on our forum, in a dedicated area, but not if it is going to become a huge expense.

My users were using it all day yesterday (although that is the day of least activity on my page) and so far they have spent only 0.10 USD.

It looks like some really good coding on the XF side.

I know that the new add-on that is in beta has this function, but I need to create a prompt for the AI, such as rules for it to follow. It would be so good to customize it for my forum.

Can you not upgrade without resetting all the permissions? It's a right PITA to have to redo them all.

> my users have been using it all day yesterday (although it is the day of least activity on my page) and they have only spent so far 0.10 USD

I assume your API is using gpt-3.5-turbo and not GPT-4?
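For anyone trying to budget this, here is a rough back-of-the-envelope sketch in Python. It assumes a flat price per 1,000 tokens; the 0.002 USD figure below is only a placeholder assumption, not a number from this thread, so check the current pricing for whichever model your API key uses. The function names are made up for illustration.

```python
def tokens_for_spend(spend_usd: float, price_per_1k_usd: float) -> int:
    """Approximate number of tokens that a given spend buys."""
    return round(spend_usd / price_per_1k_usd * 1000)


def cost_usd(tokens: int, price_per_1k_usd: float) -> float:
    """Approximate cost of processing `tokens` tokens."""
    return tokens / 1000 * price_per_1k_usd


# Placeholder price (assumption): replace with the real price for your model.
ASSUMED_PRICE_PER_1K = 0.002

# How many tokens would the 0.10 USD mentioned above correspond to?
print(tokens_for_spend(0.10, ASSUMED_PRICE_PER_1K))

# What would a hypothetical 500-token question-and-answer exchange cost?
print(cost_usd(500, ASSUMED_PRICE_PER_1K))
```

A more expensive model changes only the price constant, which is why it matters whether the API is set to gpt-3.5-turbo or GPT-4.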

021

Well-known member

> Can you not upgrade without resetting all the permissions? It's a right PITA to have to redo them all.

The update does not reset permissions. Permissions were only added in version 1.3.0, which is why you need to configure them after this update.

> Should I upgrade to ChatGPT Plus? Any real advantages?

No, ChatGPT Plus is for the OpenAI web interface. This plugin uses the API; they are two different things.

> No, ChatGPT Plus is for the OpenAI web interface. This plugin uses the API; they are two different things.

Thanks!!!

> I got this mod working roughly two hours ago. Members started figuring out how to play with it about one hour ago. Already 93 posts in the bot playpen and it's really just getting started. Fun stuff.

I'm expecting tremendous novelty usage from communities in the beginning. I'm curious what that engagement will look like in 1-2 years, and how communities with embedded AI bots will interact with the bots.

@021 - Would it be possible to add a configuration option for this mod consisting of an optional text box where we could define some text that would be injected with each request to ChatGPT (i.e. appended to any post that is sent)?

ChatGPT can format its responses with BB Code if you ask it to, but asking it to do that in every post is repetitive and not fun. If there were a text box in the options page for the mod where we could define text strings like:

please format your response with BB Code as appropriate

or

please include citations and format with BB Code as appropriate

that would be appended to every API request, that would be awesome!
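To make the request concrete, here is a minimal sketch in Python of what I have in mind. It is not how the add-on works internally; `build_messages` and its `suffix` parameter are hypothetical names standing in for the proposed text box, and the message list just follows the usual role/content chat format.

```python
from typing import Dict, List


def build_messages(post_text: str, suffix: str = "") -> List[Dict[str, str]]:
    """Build a chat-style message list, appending an optional admin-defined suffix.

    `suffix` stands in for the hypothetical text box on the options page.
    """
    post_text = post_text.strip()
    suffix = suffix.strip()
    # Put the suffix in its own paragraph so it reads as a separate instruction.
    content = f"{post_text}\n\n{suffix}" if suffix else post_text
    return [{"role": "user", "content": content}]


messages = build_messages(
    "What is the difference between HTTP and HTTPS?",
    "please format your response with BB Code as appropriate",
)
print(messages[0]["content"])
```

Leaving the box empty would send the post unchanged, so the option stays fully optional.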

021

Well-known member

> Would it be possible to add a configuration option for this mod consisting of an optional text box where we could define some text that would be injected with each request to ChatGPT (i.e. appended to any post that is sent)?

Hello. Yes, this functionality is planned for future updates.

> Question - does the mod, or the API, have a limit on the size of a query? I.e. how many characters can a member type into a post that ChatGPT will respond to?

Yes, the gpt-3.5-turbo model has a limit of 4096 tokens per request. You can read more about this model here:

You can count the number of tokens in a text here:

You can also read more information about API rate limits here:
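If you only need a quick estimate rather than an exact count, a rough sketch like the one below can tell you whether a post is likely to fit. It assumes about 4 characters per token, which is only a rule of thumb for English text, and it assumes the 4096-token limit has to cover both the post and the reply; the reserved reply size is an arbitrary illustrative value. Use a real tokenizer for exact numbers.

```python
import math

MODEL_TOKEN_LIMIT = 4096    # gpt-3.5-turbo limit mentioned above
CHARS_PER_TOKEN = 4         # assumption: rough average for English text
RESERVED_FOR_REPLY = 1024   # assumption: tokens kept free for the bot's answer


def estimate_tokens(text: str) -> int:
    """Rough token estimate based on character count (not an exact tokenizer)."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)


def fits_in_request(text: str, reserved: int = RESERVED_FOR_REPLY) -> bool:
    """Check whether the estimated prompt size plus reserved reply fits the limit."""
    return estimate_tokens(text) + reserved <= MODEL_TOKEN_LIMIT


post = "How do I configure user group permissions for this add-on?"
print(estimate_tokens(post), fits_in_request(post))
```

With these assumed values, a post of up to roughly 12,000 characters would still leave room for the reply.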
